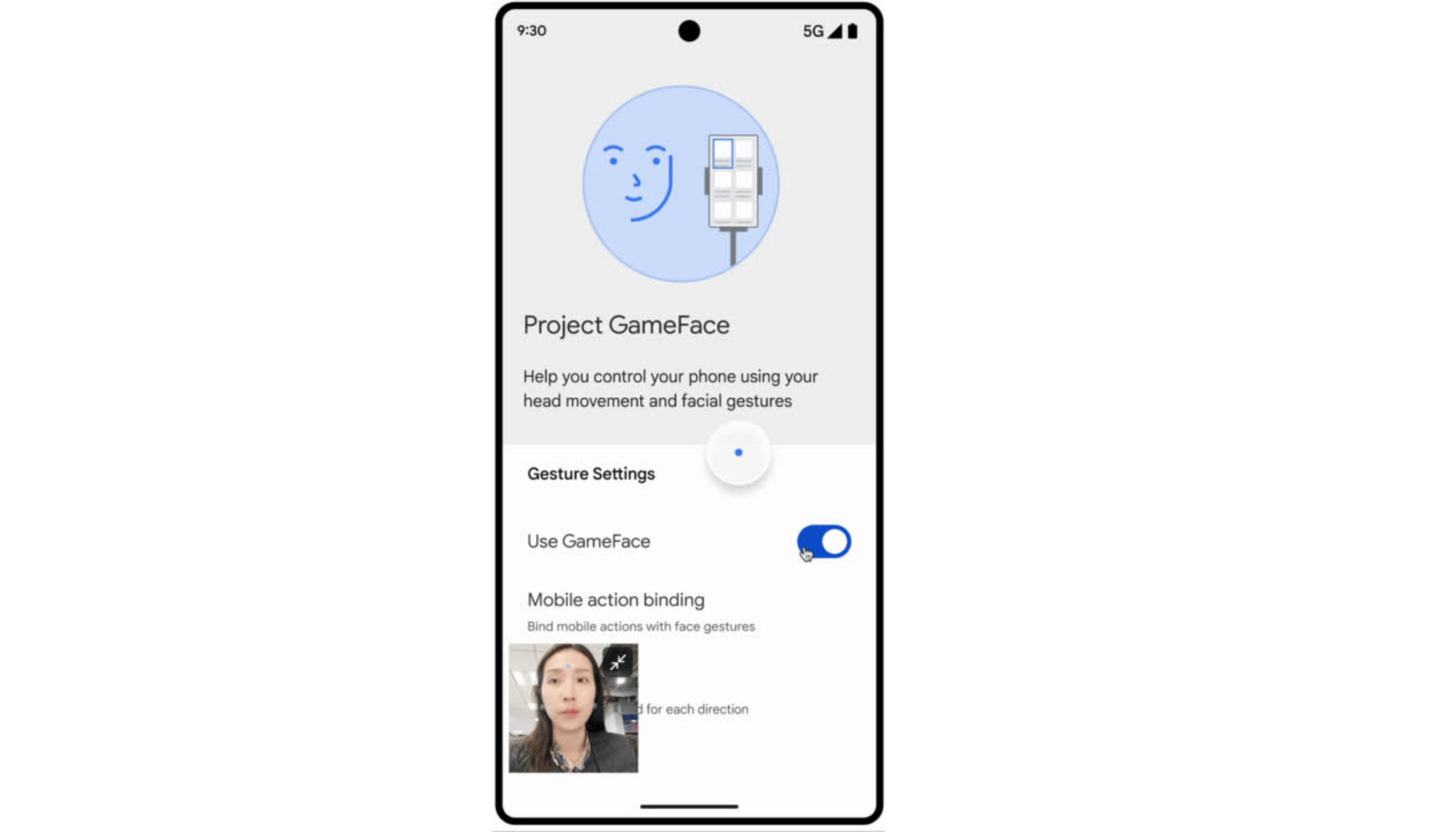

At I/O 2023, we launched Project Gameface, an open-source, hands-free gaming ‘mouse’ enabling people to control a computer’s cursor using their head movement and facial gestures. People can raise their eyebrows to click and drag, or open their mouth to move the cursor, making gaming more accessible.

The project was inspired by the story of quadriplegic video game streamer Lance Carr, who lives with muscular dystrophy, a progressive disease that weakens muscles. We collaborated with Lance to bring Project Gameface to life. The full story behind the product is available on the Google Keyword blog.

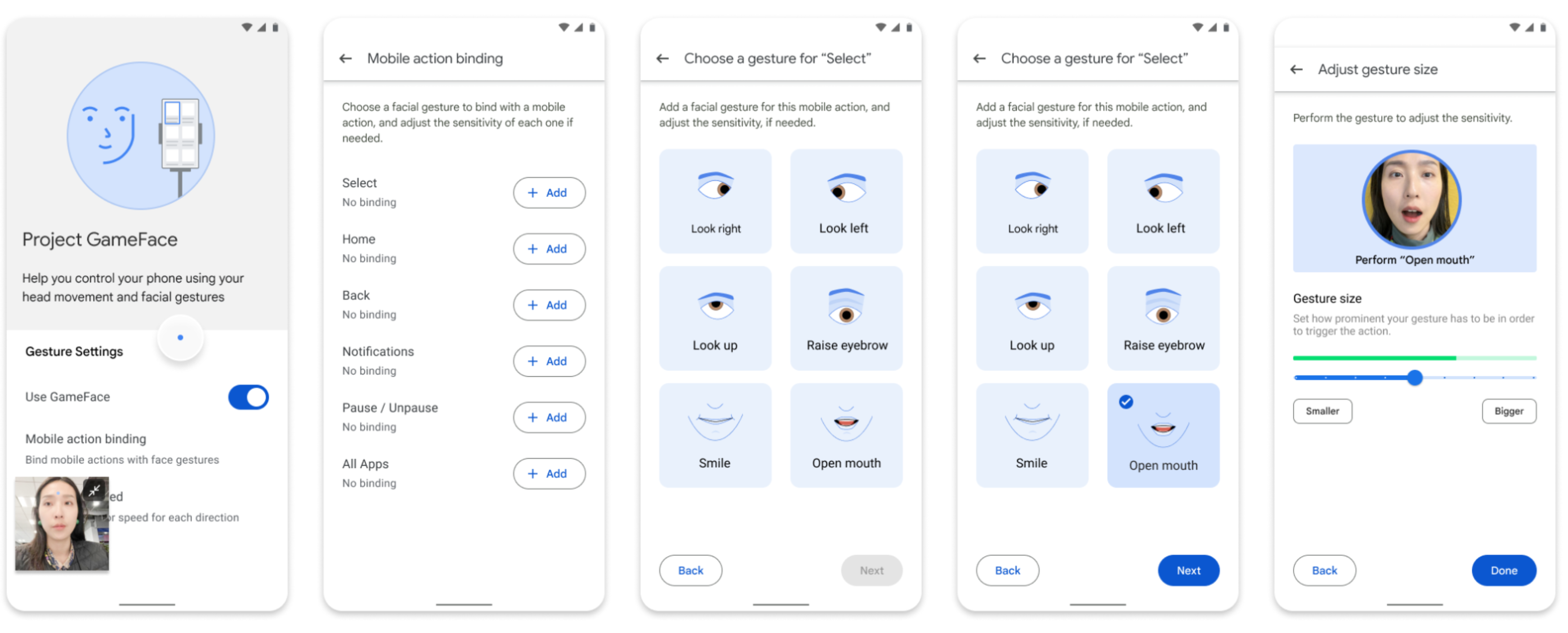

We’ve been delighted to see companies like playAbility utilize Project Gameface building blocks, like MediaPipe Blendshapes, in their inclusive software. Now, we’re open sourcing more code for Project Gameface to help developers build Android applications that make every Android device more accessible. Through the device's camera, Project Gameface tracks facial expressions and head movements and translates them into intuitive, personalized control. Developers can now build applications in which users configure their experience by customizing facial expressions, gesture sizes, cursor speed, and more.

For this release, we collaborated with Incluzza, a social enterprise in India that supports people with disabilities, to learn how Project Gameface can be expanded to educational, work and other settings, such as typing messages to family or searching for new jobs.


While building Project Gameface for Android, we based our product design and development on three core principles:

2. Build a cost-effective solution that’s generally available to enable scalable usage.

3. Leverage the learnings and guiding principles from the first Gameface launch to make the product user-friendly and customizable.

We are launching a novel way to start operating an Android device. Based on the positive feedback on Project Gameface, we realized that developers and users appreciated the idea of moving a cursor with head movement and taking actions through facial expressions.

We have applied the same idea to bring a new virtual cursor to Android devices. We use the Android accessibility service to create the cursor, and MediaPipe’s Face Landmarks Detection API to move it according to the user’s head movement.
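To make the head-to-cursor idea concrete, here is a minimal sketch in plain Java. It is not the Project Gameface implementation: the class `HeadCursor`, its `speed` multiplier, and the choice to track a single head point in normalized image coordinates are all assumptions made for illustration. It moves a cursor by the change in head position between frames and keeps it on screen.

```java
// Hypothetical sketch, not the Project Gameface code.
// Moves a virtual cursor by the frame-to-frame change of one tracked head point.
final class HeadCursor {
    private final int screenWidth;
    private final int screenHeight;
    private final float speed;          // assumed user-configurable multiplier
    private float x;
    private float y;
    private float lastHeadX = Float.NaN; // NaN means "no previous frame yet"
    private float lastHeadY = Float.NaN;

    HeadCursor(int screenWidth, int screenHeight, float speed) {
        this.screenWidth = screenWidth;
        this.screenHeight = screenHeight;
        this.speed = speed;
        this.x = screenWidth / 2f;       // start in the middle of the screen
        this.y = screenHeight / 2f;
    }

    /** Feeds one head position in normalized [0, 1] image coordinates. */
    void onHeadPosition(float headX, float headY) {
        if (!Float.isNaN(lastHeadX)) {
            float dx = (headX - lastHeadX) * screenWidth * speed;
            float dy = (headY - lastHeadY) * screenHeight * speed;
            x = clamp(x + dx, 0f, screenWidth - 1f);
            y = clamp(y + dy, 0f, screenHeight - 1f);
        }
        lastHeadX = headX;
        lastHeadY = headY;
    }

    float x() { return x; }
    float y() { return y; }

    private static float clamp(float value, float lo, float hi) {
        return Math.max(lo, Math.min(hi, value));
    }
}
```

The first frame only records a reference position, so the cursor does not jump when tracking starts. A larger `speed` lets a small head movement cover more of the screen.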

Within the API, there are 52 face blendshape values, which represent the expressiveness of 52 facial gestures such as raising the left eyebrow or opening the mouth. We use some of these 52 values to map and control a wide range of functions, giving users more options for customization. We also use the blendshape coefficients, which let developers set a different threshold for each expression and so customize the experience.
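Per-expression thresholds can be sketched as a simple lookup. The example below is an illustration in plain Java under stated assumptions, not the project's code: the class `BlendshapeGestures` and the threshold values are hypothetical, and the scores are assumed to arrive as a map from blendshape name (for example `jawOpen` or `browInnerUp`) to a coefficient between 0 and 1.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical sketch: decides which configured expressions are active
// by comparing each blendshape coefficient against its own threshold.
final class BlendshapeGestures {
    // Insertion-ordered so results come back in the order gestures were configured.
    private final Map<String, Float> thresholds = new LinkedHashMap<>();

    /** Sets the activation threshold (0..1) for one blendshape name. */
    void setThreshold(String blendshape, float threshold) {
        thresholds.put(blendshape, threshold);
    }

    /** Returns the configured blendshapes whose score meets or exceeds the threshold. */
    List<String> activeGestures(Map<String, Float> scores) {
        List<String> active = new ArrayList<>();
        for (Map.Entry<String, Float> entry : thresholds.entrySet()) {
            Float score = scores.get(entry.getKey());
            if (score != null && score >= entry.getValue()) {
                active.add(entry.getKey());
            }
        }
        return active;
    }
}
```

Keeping one threshold per expression means a user who can only open their mouth slightly can lower the `jawOpen` threshold without making other gestures more sensitive.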

In the Windows version of Project Gameface, we enabled users to replicate common click actions. On Android, however, users need to perform a wider array of actions. There are touch events that are sent to the OS, and there are global action events like “Go Back”, “Switch to Multitasking”, and “Home”. We used the mobile actions supported by the Android Accessibility API to determine which actions could be offered to the user. Currently, Project Gameface for Android supports GLOBAL_ACTION_HOME, GLOBAL_ACTION_BACK, GLOBAL_ACTION_NOTIFICATIONS, and GLOBAL_ACTION_ACCESSIBILITY_ALL_APPS.
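One way to picture the link between gestures and global actions is a user-editable binding table. The sketch below is plain Java so it runs without the Android SDK; the class `ActionBindings`, its nested enum, and the gesture names are assumptions for illustration, not the project's code. In a real accessibility service, the looked-up action would typically be passed as the matching `AccessibilityService` constant to `performGlobalAction(int)`.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

// Hypothetical sketch: binds a gesture name to one of the supported global actions.
final class ActionBindings {
    /** The four global actions listed above, carried here by constant name only. */
    enum GlobalAction {
        HOME("GLOBAL_ACTION_HOME"),
        BACK("GLOBAL_ACTION_BACK"),
        NOTIFICATIONS("GLOBAL_ACTION_NOTIFICATIONS"),
        ALL_APPS("GLOBAL_ACTION_ACCESSIBILITY_ALL_APPS");

        final String constantName;

        GlobalAction(String constantName) {
            this.constantName = constantName;
        }
    }

    private final Map<String, GlobalAction> byGesture = new HashMap<>();

    /** Binds (or rebinds) a gesture to an action. */
    void bind(String gesture, GlobalAction action) {
        byGesture.put(gesture, action);
    }

    /** Returns the action bound to the gesture, or empty if none is bound. */
    Optional<GlobalAction> actionFor(String gesture) {
        return Optional.ofNullable(byGesture.get(gesture));
    }
}
```

For example, after `bind("jawOpen", ActionBindings.GlobalAction.HOME)`, a detected `jawOpen` gesture resolves to the Home action, while an unbound gesture resolves to an empty result and can be ignored.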

The camera feed significantly enhances the user experience, facilitating accurate threshold settings and a deeper comprehension of gestures. It also sends a clear signal to the user that their camera is being actively used to understand their head movements and gestures.

Simply creating an overlay of the camera feed would have prevented developers from accessing some important sections of their Android device, like the Android settings. We use the Android accessibility service with Project Gameface so that the camera feed keeps floating even in Android settings and other important sections of a user’s Android device.

The Android accessibility service currently lacks a straightforward method for users to perform real-time interactive screen dragging. However, our product has been upgraded to include drag functionality, allowing users to define both starting and ending points. Consequently, the drag action will be executed seamlessly along the specified path.
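A drag defined by a start point and an end point can be sketched as a straight-line path between the two. The plain-Java example below is an illustration only: the class `DragPath`, the `steps` parameter, and the choice of linear interpolation are assumptions, and the source does not say how the project builds its path. On Android, one possible approach is to hand such a path to the accessibility gesture API (`GestureDescription` with `dispatchGesture`).

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch: builds a straight drag path from a start point to an end point.
final class DragPath {
    private DragPath() {}

    /**
     * Returns steps + 1 points, each as {x, y}, evenly spaced along the line
     * from (startX, startY) to (endX, endY), including both endpoints.
     */
    static List<float[]> interpolate(float startX, float startY,
                                     float endX, float endY, int steps) {
        if (steps < 1) {
            throw new IllegalArgumentException("steps must be >= 1");
        }
        List<float[]> points = new ArrayList<>(steps + 1);
        for (int i = 0; i <= steps; i++) {
            float t = (float) i / steps; // 0 at the start point, 1 at the end point
            points.add(new float[] {
                startX + t * (endX - startX),
                startY + t * (endY - startY)
            });
        }
        return points;
    }
}
```

Because the user picks both endpoints before the drag runs, the whole path is known up front, which fits a gesture API that takes a complete path instead of live pointer updates.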

We’re excited to see the potential of Project Gameface and can’t wait for developers and enterprises to leverage it to build new experiences. The code for Gameface is now open sourced on GitHub.

We would like to acknowledge the invaluable contributions of the following people to Project Gameface for Android: Edwina Priest, Sisi Jin, KC Chung, Boon Panichprecha, Dome Seelapun, Kim Nomrak, Guide Pumithanon, Lance Carr, Communique Team (Meher Dabral, Samudra Sengupta), EnAble/Incluzza India (Shristi G, Vinaya C, Debashree Bhattacharya, Manju Sharma, Jeeja Ghosh, Sultana Banu, Sunetra Gupta, Ajay Balachandran, Karthik Chandrasekar).