We're excited to share Model Explorer, a powerful graph visualization tool. Whether you're developing your own model, converting a model between formats, optimizing for a specific device, or debugging performance and quality, being able to visualize the model architecture and how data flows between nodes is very useful. With an intuitive, hierarchical visualization of even the largest graphs, Model Explorer helps developers manage the complexity of working with large models, particularly when optimizing for edge devices.

This is the third blog post in our series covering Google AI Edge developer releases: the first two posts introduced AI Edge Torch and the Generative API, which enable PyTorch models and high-performance LLMs on device.

Originally developed as a utility for Google researchers and engineers, Model Explorer is now publicly available as part of the Google AI Edge family of products. The initial version of Model Explorer offers the following:

In this blog post, we walk through how to get started with Model Explorer and a few of its use cases. Further documentation and examples are available in the Model Explorer documentation.

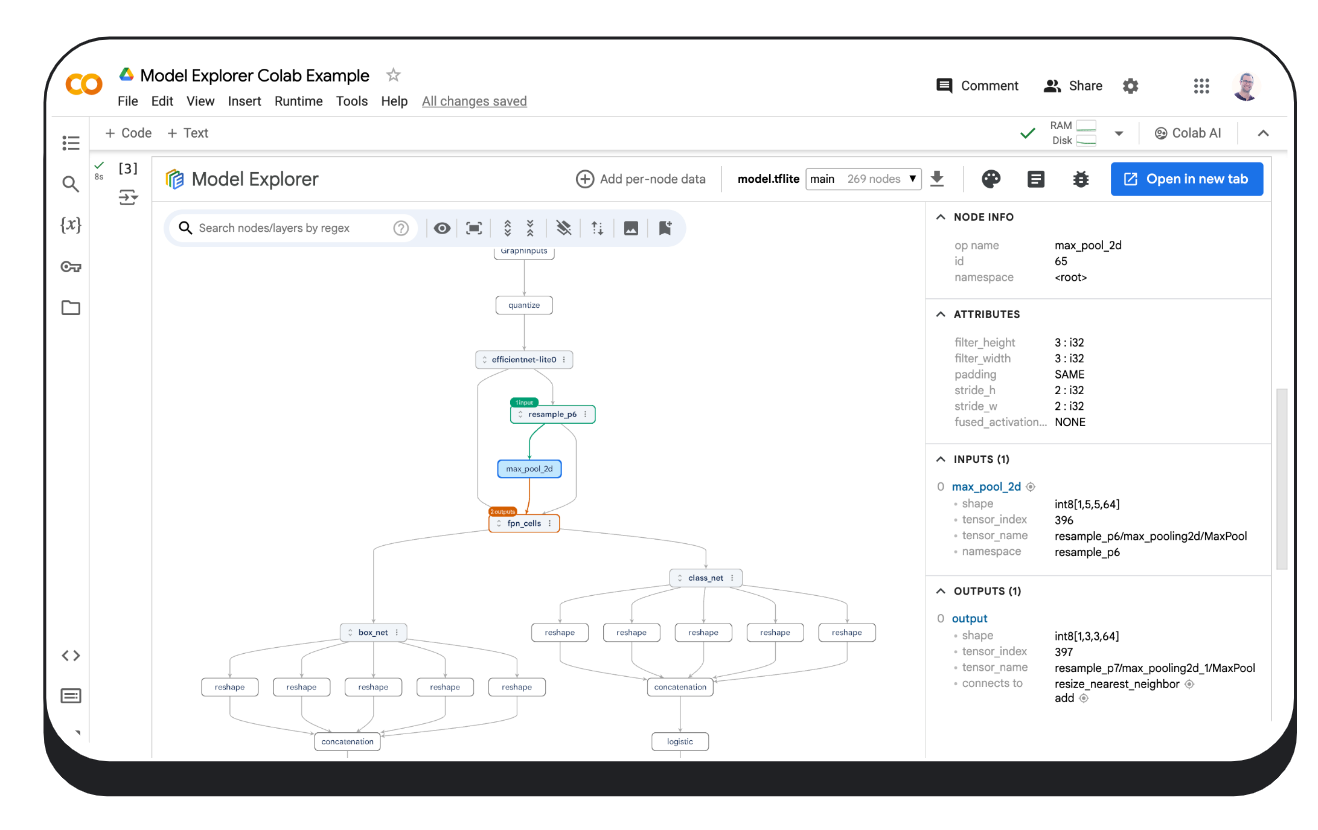

The easy-to-install Model Explorer PyPI package runs locally on your device, in Colab, or in a Python file.

```shell
$ pip install ai-edge-model-explorer
$ model-explorer
Starting Model Explorer server at http://localhost:8080
```

These commands start a server at localhost:8080 and open the Model Explorer web app in a browser tab. Read more about Model Explorer command line usage in the command line guide.

Once the localhost server is running, upload a model file from your computer (supported formats include those used by JAX, PyTorch, TensorFlow, and TensorFlow Lite) and, if needed, select the best adapter for your model from the 'Adapter' drop-down menu. See the adapter extension documentation to learn how to use Model Explorer's adapter extension system to visualize unsupported model formats.

To run Model Explorer in a Colab notebook, use a cell like the following:

```python
# Download a model (this example uses an EfficientDet TFLite model)
import os
import tempfile
import urllib.request

tmp_path = tempfile.mkdtemp()
model_path = os.path.join(tmp_path, 'model.tflite')
urllib.request.urlretrieve("https://storage.googleapis.com/tfweb/model-graph-vis-v2-test-models/efficientdet.tflite", model_path)

# Install Model Explorer (notebook magic; run this inside a notebook cell)
%pip install ai-edge-model-explorer

# Visualize the downloaded EfficientDet model
import model_explorer
model_explorer.visualize(model_path)
```

After you run the cell, Model Explorer is displayed in an iFrame embedded in a new cell. In Chrome, the UI also shows an "Open in new tab" button that you can click to open the UI in a separate tab. See the Model Explorer documentation to learn more about running Model Explorer in Colab.

The model_explorer package provides convenient APIs for visualizing models from files or from a PyTorch module, along with lower-level APIs for visualizing models from multiple sources. Make sure to install it first by following the installation guide. See the Model Explorer API guide to learn more.
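As a minimal sketch of the lower-level route, the snippet below collects several model files into one configuration and opens them in a single session. It assumes the `config()`, `add_model_from_path`, and `visualize_from_config` calls described in the Model Explorer API guide; the helper names `existing_models` and `visualize_models` are hypothetical and exist only for this illustration.

```python
import os


def existing_models(paths):
    """Return only the paths that point to existing files, keeping their order."""
    return [p for p in paths if os.path.isfile(p)]


def visualize_models(paths):
    """Open every existing model file in one Model Explorer session."""
    # Imported lazily so the helper above can be used without the package installed.
    import model_explorer

    # Assumption: these calls behave as described in the Model Explorer API guide.
    config = model_explorer.config()
    for path in existing_models(paths):
        config.add_model_from_path(path)
    # Starts the local server and opens the UI for the configured models.
    model_explorer.visualize_from_config(config)
```

Calling `visualize_models(['/path/to/a.tflite', '/path/to/b.tflite'])` would then show whichever of those (placeholder) files exist on disk.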

Below is an example of how to visualize a PyTorch model. Visualizing PyTorch models requires a slightly different approach from other formats, because PyTorch does not have a standard serialization format. Model Explorer offers a specialized API that visualizes PyTorch models directly, using the ExportedProgram from torch.export.export.

```python
import model_explorer
import torch
import torchvision

# Prepare a PyTorch model and its inputs
model = torchvision.models.mobilenet_v2().eval()
inputs = (torch.rand([1, 3, 224, 224]),)
ep = torch.export.export(model, inputs)

# Visualize
model_explorer.visualize_pytorch('mobilenet', exported_program=ep)
```

No matter how you visualize your models, under the hood Model Explorer implements GPU-accelerated graph rendering with WebGL and three.js, which delivers a smooth 60 FPS visualization experience even for graphs containing tens of thousands of nodes. If you're interested in learning more about how Model Explorer renders large graphs, you can read about it on the Google Research blog.

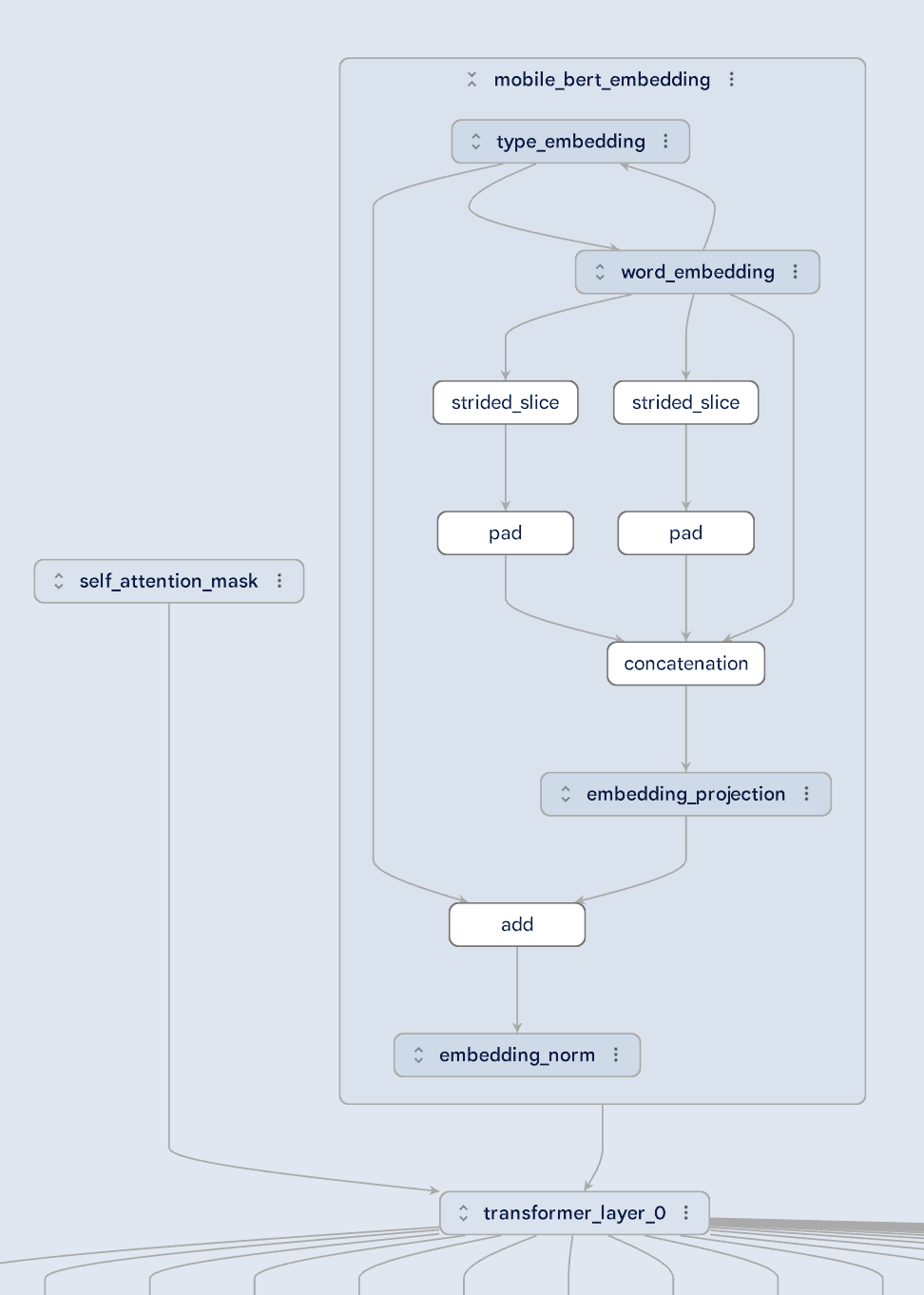

Large models can be complex, but Model Explorer makes them easier to understand by breaking the visualization down into hierarchical layers. Take a look at the MobileBert model pictured below: it is clear how the self-attention mask and the embedding are fed into a transformer layer. You can even dive deeper into the embedding layer to understand the relationships between the different types of embeddings. Model Explorer's hierarchical view makes even the most complex model architectures easier to understand.

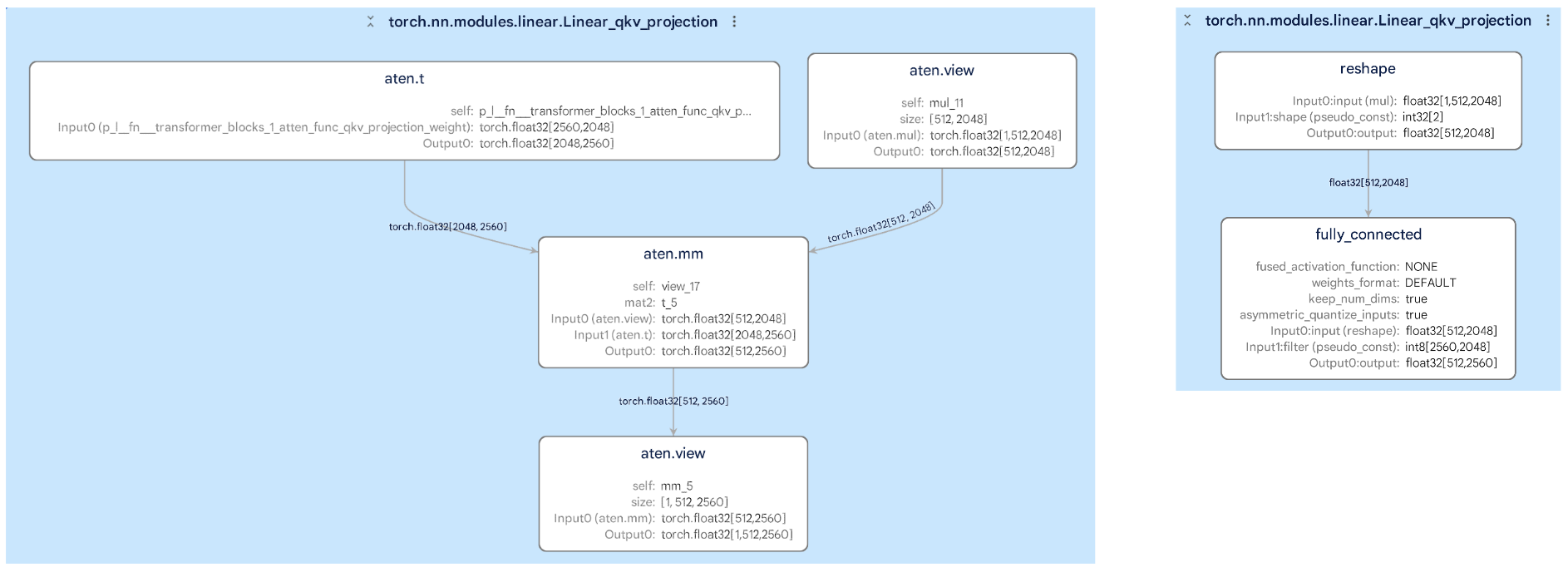
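To make the idea of hierarchical layers concrete, here is a small sketch that nests flat node names into a tree and summarizes each top-level group. It is not Model Explorer's implementation; it assumes nodes carry slash-separated namespace names, and the node names used here are hypothetical.

```python
def build_hierarchy(node_names, sep="/"):
    """Nest names such as 'a/b/op' into a dict-of-dicts tree."""
    root = {}
    for name in node_names:
        level = root
        for part in name.split(sep):
            level = level.setdefault(part, {})
    return root


def count_leaves(tree):
    """Count op nodes (entries without children) below a subtree."""
    if not tree:
        return 1
    return sum(count_leaves(child) for child in tree.values())


# Hypothetical node names, loosely modeled on a transformer graph.
nodes = [
    "embeddings/word/gather",
    "embeddings/position/gather",
    "embeddings/add",
    "transformer/layer_0/attention/matmul",
    "transformer/layer_0/attention/softmax",
    "transformer/layer_0/ffn/dense",
]

tree = build_hierarchy(nodes)
# A collapsed top-level view: one entry per layer with its op count.
summary = {layer: count_leaves(subtree) for layer, subtree in tree.items()}
print(summary)
```

Collapsing a group to a single entry like this is what keeps a very large graph readable: you only expand the layer you want to inspect.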

Converting a model from one format to another (such as PyTorch to TFLite) can be tricky, but Model Explorer helps you compare the original and converted graphs side by side. This makes it easy to spot any changes that might affect your model's performance. For example, in the image below you can see how a subgraph within a layer has changed during conversion, which helps you identify and fix potential errors.

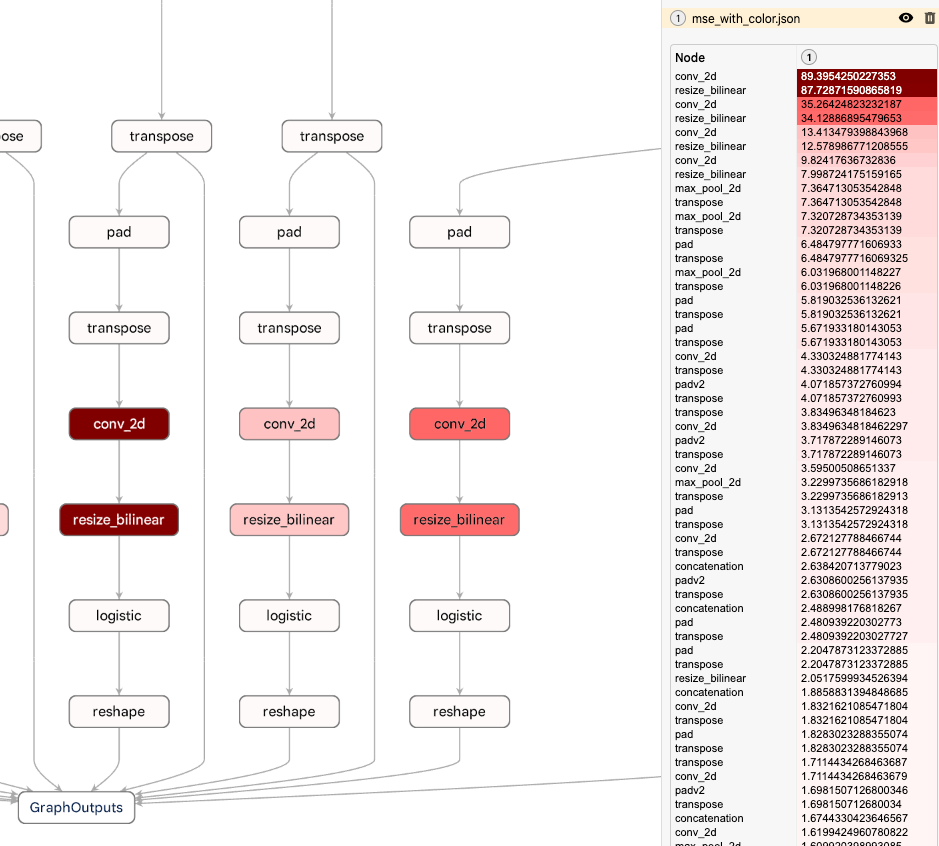
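A side-by-side comparison can be complemented by a quick numeric check. The sketch below counts op types in two graphs and reports which counts changed; the graph representation (a list of dicts with an `op` key) and the op names are assumptions made for illustration, not a format Model Explorer defines.

```python
from collections import Counter


def op_histogram(graph):
    """Count how many nodes of each op type a graph contains."""
    return Counter(node["op"] for node in graph)


def diff_ops(original, converted):
    """Return {op: converted_count - original_count} for ops whose count changed."""
    before = op_histogram(original)
    after = op_histogram(converted)
    return {
        op: after[op] - before[op]
        for op in sorted(set(before) | set(after))
        if after[op] != before[op]
    }


# Hypothetical graphs: conversion fused batch_norm and relu into the conv op.
original = [{"op": "conv"}, {"op": "batch_norm"}, {"op": "relu"}, {"op": "dense"}]
converted = [{"op": "conv"}, {"op": "dense"}]

changes = diff_ops(original, converted)
print(changes)
```

A negative count points at ops that disappeared during conversion, which is a useful cue for where to look in the visual comparison.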

A powerful Model Explorer feature is its ability to overlay per-node data on a graph, so you can sort, search, and stylize nodes using the values in that data. Combined with Model Explorer's hierarchical view system, this feature lets you quickly find performance or numeric bottlenecks. In our example, which overlays the mean squared error at each node between a quantized TFLite model and its floating point counterpart, Model Explorer highlights that the quality drop is near the bottom of the graph, giving you the information you need to adjust your quantization method. To learn more about working with custom data in Model Explorer, see our detailed documentation on GitHub.
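The per-node error in such an overlay could be computed along these lines. This is a sketch under stated assumptions: the node names and output values are made up, and the JSON layout (a `results` map from node to `value`) is an assumed shape, so check the custom node data documentation for the exact schema before using it.

```python
import json


def mean_squared_error(reference, candidate):
    """Mean of squared element-wise differences between two equal-length lists."""
    if not reference or len(reference) != len(candidate):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((r - c) ** 2 for r, c in zip(reference, candidate)) / len(reference)


def per_node_mse(float_outputs, quant_outputs):
    """Compute the MSE for every node present in the floating point outputs."""
    return {
        node: mean_squared_error(values, quant_outputs[node])
        for node, values in float_outputs.items()
    }


# Hypothetical per-node outputs from a float model and its quantized version.
float_outputs = {"conv_1": [0.0, 1.0, 2.0], "softmax": [0.25, 0.75]}
quant_outputs = {"conv_1": [0.0, 1.0, 2.0], "softmax": [0.5, 0.5]}

errors = per_node_mse(float_outputs, quant_outputs)
# Nodes with the largest error first, to see where quality degrades.
worst_first = sorted(errors.items(), key=lambda item: item[1], reverse=True)

# Assumed overlay layout; the real schema may use different keys.
overlay = {"results": {node: {"value": err} for node, err in errors.items()}}
print(json.dumps(overlay, indent=2))
```

Sorting by error mirrors what the overlay lets you do in the UI: the nodes at the top of `worst_first` are the first candidates for a different quantization setting.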

Among Model Explorer's most prominent users at Google are Waymo and Google Silicon. Model Explorer played an important role in helping these teams debug and optimize on-device models such as Gemini Nano.

We see this as just the beginning. In the coming months, we will focus on improving the core by refining key UI features, such as graph diffing and editing, and on improving extensibility by letting you integrate your own tools into Model Explorer.

This work is a collaboration across multiple functional teams at Google. We would like to thank engineers Na Li, Jing Jin, Eric (Yijie) Yang, Akshat Sharma, Chi Zeng, Jacques Pienaar, Chun-nien Chan, Jun Jiang, Matthew Soulanille, Arian Arfaian, Majid Dadashi, Renjie Wu, Zichuan Wei, Advait Jain, Ram Iyengar, Matthias Grundmann, Cormac Brick, and Ruofei Du, our Technical Program Manager, Kristen Wright, and our Product Manager, Aaron Karp. We would also like to thank the UX team, including Zi Yuan, Anila Alexander, Elaine Thai, Joe Moran, and Amber Heinbockel.