JUNE 10, 2025 / Pay

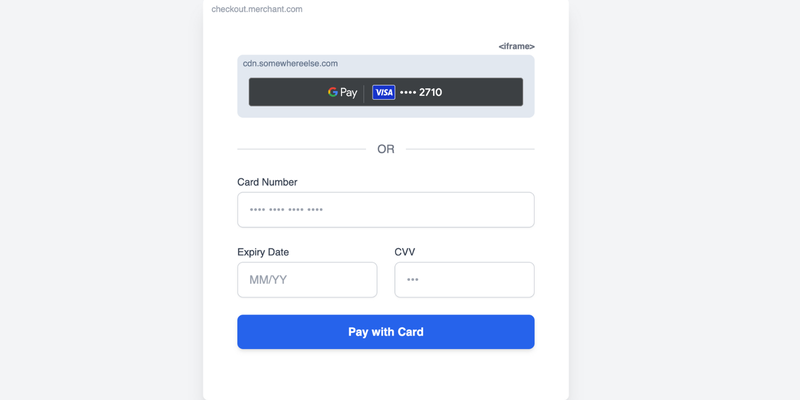

Implementing Google Pay inside a sandboxed iframe on checkout pages isolates its scripts from the rest of the page, which helps meet PCI DSS v4 requirements. Shopify used this approach and passed its PCI DSS v4 audit.
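As a rough illustration of the sandboxed-iframe idea, the sketch below builds the markup for an iframe that would host the Google Pay button. The helper name `buildPaymentFrame`, the example URL, and the choice of sandbox tokens are assumptions made for illustration, not details from the post; the tokens a real integration needs should be taken from the Google Pay documentation.

```typescript
// Hypothetical helper: builds markup for a sandboxed payment iframe.
// The sandbox tokens are standard HTML values; which ones a real
// Google Pay integration requires is an assumption here.
type SandboxToken =
  | "allow-scripts"
  | "allow-popups"
  | "allow-same-origin"
  | "allow-forms";

interface PaymentFrameOptions {
  src: string; // hypothetical URL of the page that renders the pay button
  title: string; // accessible title for the frame
  sandbox: SandboxToken[]; // capabilities granted back to the framed page
}

// Escape characters that would break out of a double-quoted attribute.
function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

function buildPaymentFrame(opts: PaymentFrameOptions): string {
  // Drop duplicate tokens while keeping the caller's order.
  const tokens = opts.sandbox
    .filter((token, index, all) => all.indexOf(token) === index)
    .join(" ");
  // allow="payment" delegates the Payment Request permission to the frame.
  return (
    `<iframe src="${escapeAttr(opts.src)}" title="${escapeAttr(opts.title)}" ` +
    `sandbox="${tokens}" allow="payment"></iframe>`
  );
}
```

Anything not listed in `sandbox` stays blocked for the framed page. That is the isolation property the summary refers to: scripts inside the frame get only the capabilities explicitly granted back.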

MAY 29, 2025 / Cloud

Three developer-friendly MarTech solutions – sGTM Pantheon for data control, GA4 Dataform for data transformation, and FeedX for A/B testing shopping feeds – empower developers to leverage marketing data for insights, strategies, and better results.

MAY 28, 2025 / Android

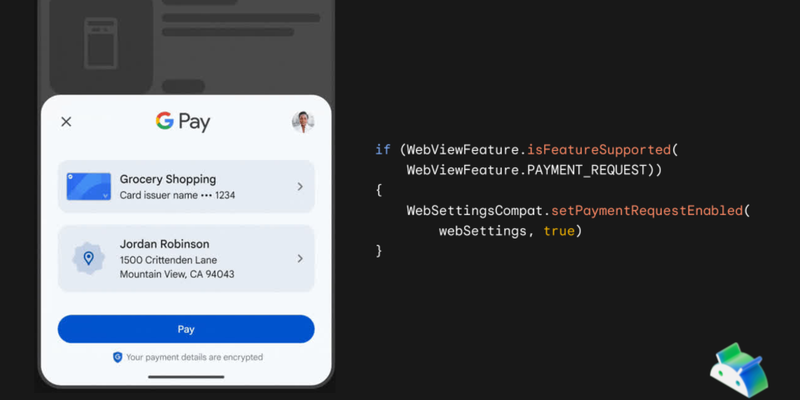

Google Pay is now supported in Android WebView, starting with WebView version 137 and Play Services 25.18.30, so users can pay with the native Google Pay payment sheet inside embedded web checkout flows.
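The two version thresholds above can be turned into a simple gate. The sketch below is a minimal version comparison, assuming the versions are available as dotted strings. The function names are hypothetical, and how a page or host app actually obtains these version strings is outside what the summary describes.

```typescript
// Minimum versions quoted in the summary above.
const MIN_WEBVIEW = "137";
const MIN_PLAY_SERVICES = "25.18.30";

// Split "25.18.30" into [25, 18, 30]; non-numeric parts count as 0.
function parseVersion(version: string): number[] {
  return version.split(".").map((part) => parseInt(part, 10) || 0);
}

// True when `actual` is the same as or newer than `minimum`,
// comparing numerically component by component.
function atLeast(actual: string, minimum: string): boolean {
  const a = parseVersion(actual);
  const m = parseVersion(minimum);
  const length = Math.max(a.length, m.length);
  for (let i = 0; i < length; i++) {
    const x = a[i] || 0; // missing components are treated as 0
    const y = m[i] || 0;
    if (x !== y) {
      return x > y;
    }
  }
  return true; // all components equal
}

// Hypothetical gate: both thresholds must be met.
function meetsGooglePayWebViewMinimums(
  webViewVersion: string,
  playServicesVersion: string
): boolean {
  return (
    atLeast(webViewVersion, MIN_WEBVIEW) &&
    atLeast(playServicesVersion, MIN_PLAY_SERVICES)
  );
}
```

Comparing components as numbers rather than as strings matters here: a plain string comparison would rank "25.9" above "25.18".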

MAY 28, 2025 / Gemini

The Magic Mirror project uses the Gemini API, including the Live API, Function Calling, and Grounding with Google Search, to turn a familiar object into an interactive experience. It demonstrates how Gemini models can generate visuals, tell stories, and provide real-time information.

MAY 23, 2025 / Gemini

Announcing new features and models for the Gemini API, with the introduction of Gemini 2.5 Flash Preview with improved reasoning and efficiency, Gemini 2.5 Pro and Flash text-to-speech supporting multiple languages and speakers, and Gemini 2.5 Flash native audio dialog for conversational AI.

MAY 22, 2025 / Smart Home

Gemini intelligence is being integrated into Google Home APIs, offering developers access to over 750 million devices and enabling advanced features like AI-powered camera analysis and automated routines.

MAY 22, 2025 / Wallet

Google Wallet has expanded globally and introduced new features like digital IDs via a new Digital Credentials API, granular notifications for pass updates, and nearby pass notifications, along with other features like Value Added Opportunities and a Pass Upgrade experience.

MAY 21, 2025 / Pay

At Google I/O 2025, new Google Pay API updates were unveiled to enhance checkout experiences with features like Android WebViews integration, a more versatile API, and improved developer tools.

MAY 21, 2025 / Google AI Studio

Google AI Studio has been upgraded to enhance the developer experience, featuring native code generation with Gemini 2.5 Pro, agentic tools, and enhanced multimodal generation capabilities, plus new features like the Build tab, Live API, and improved tools for building sophisticated AI applications.

MAY 20, 2025 / Gemini

Google Colab is launching a reimagined AI-first version at Google I/O, featuring an agentic collaborator powered by Gemini 2.5 Flash with iterative querying capabilities, an upgraded Data Science Agent, effortless code transformation, and flexible interaction methods, aiming to significantly improve coding workflows.