A guest post by the XR Development team at KDDI & Alpha-U

Please note that the information, uses, and applications expressed in the below post are solely those of our guest author, KDDI.

VTubers, or virtual YouTubers, are online entertainers who use a virtual avatar generated using computer graphics. This digital trend originated in Japan in the mid-2010s, and has become an international online phenomenon. A majority of VTubers are English and Japanese-speaking YouTubers or live streamers who use avatar designs.

KDDI, a telecommunications operator in Japan with over 40 million customers, wanted to experiment with various technologies built on its 5G network but found that getting accurate movements and human-like facial expressions in real-time was challenging.

Announced at Google I/O 2023 in May, the MediaPipe Face Landmarker solution detects facial landmarks and outputs blendshape scores to render a 3D face model that matches the user. With the MediaPipe Face Landmarker solution, KDDI and the Google Partner Innovation team successfully brought realism to their avatars.

Using MediaPipe's Python package, KDDI developers were able to detect the performer’s facial features and extract 52 blendshapes in real time.

import mediapipe as mp

from mediapipe.tasks import python as mp_python

MP_TASK_FILE = "face_landmarker_with_blendshapes.task"

class FaceMeshDetector:

def __init__(self):

with open(MP_TASK_FILE, mode="rb") as f:

f_buffer = f.read()

base_options = mp_python.BaseOptions(model_asset_buffer=f_buffer)

options = mp_python.vision.FaceLandmarkerOptions(

base_options=base_options,

output_face_blendshapes=True,

output_facial_transformation_matrixes=True,

running_mode=mp.tasks.vision.RunningMode.LIVE_STREAM,

num_faces=1,

result_callback=self.mp_callback)

self.model = mp_python.vision.FaceLandmarker.create_from_options(

options)

self.landmarks = None

self.blendshapes = None

self.latest_time_ms = 0

def mp_callback(self, mp_result, output_image, timestamp_ms: int):

if len(mp_result.face_landmarks) >= 1 and len(

mp_result.face_blendshapes) >= 1:

self.landmarks = mp_result.face_landmarks[0]

self.blendshapes = [b.score for b in mp_result.face_blendshapes[0]]

def update(self, frame):

t_ms = int(time.time() * 1000)

if t_ms <= self.latest_time_ms:

return

frame_mp = mp.Image(image_format=mp.ImageFormat.SRGB, data=frame)

self.model.detect_async(frame_mp, t_ms)

self.latest_time_ms = t_ms

def get_results(self):

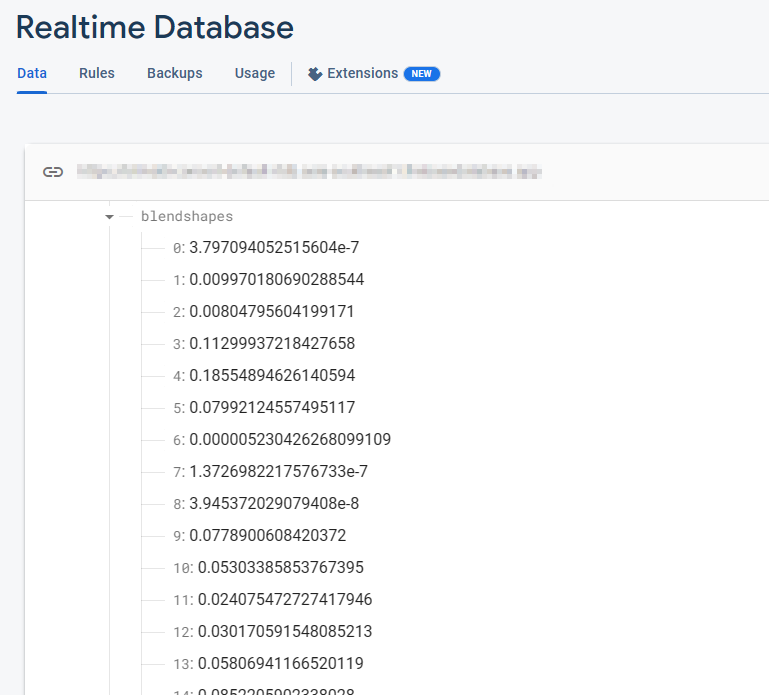

return self.landmarks, self.blendshapesThe Firebase Realtime Database stores a collection of 52 blendshape float values. Each row corresponds to a specific blendshape, listed in order.

```
_neutral,
browDownLeft,
browDownRight,
browInnerUp,
browOuterUpLeft,
...
```

These blendshape values are continuously updated in real time as the camera is open and the FaceMesh model is running. With each frame, the database reflects the latest blendshape values, capturing the dynamic changes in facial expressions as detected by the FaceMesh model.
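Because each row is identified only by its position, anything reading the database has to know the blendshape order in advance. As a minimal sketch of an alternative, assuming each entry in `face_blendshapes[0]` exposes `category_name` and `score` (as MediaPipe's `Category` objects do), the scores could be keyed by name before upload. The `Category` dataclass below is only a stand-in so the snippet runs without MediaPipe, and `to_named_scores` is a hypothetical helper, not part of KDDI's code.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass
class Category:
    """Stand-in for MediaPipe's Category (only the fields used here)."""
    category_name: str
    score: float


def to_named_scores(categories: Iterable[Category],
                    digits: int = 4) -> Dict[str, float]:
    """Map each blendshape name to its score, rounded to `digits` places."""
    return {c.category_name: round(float(c.score), digits) for c in categories}


if __name__ == "__main__":
    sample = [Category("_neutral", 0.0), Category("browInnerUp", 0.41996)]
    print(to_named_scores(sample))
```

Rounding is an added assumption that trims payload size; whether it is worthwhile depends on how much precision the animation needs.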

After extracting the blendshape data, the next step is to transmit it to the Firebase Realtime Database. Firebase handles the delivery of real-time data to clients, so KDDI does not have to manage server scalability and can focus on delivering a streamlined user experience.

```python
import concurrent.futures
import time

import cv2
import firebase_admin
import mediapipe as mp
import numpy as np
from firebase_admin import credentials, db

# Database writes run on a small thread pool so they do not block capture.
pool = concurrent.futures.ThreadPoolExecutor(max_workers=4)

cred = credentials.Certificate('your-certificate.json')
firebase_admin.initialize_app(
    cred, {
        'databaseURL': 'https://your-project.firebasedatabase.app/'
    })
ref = db.reference('projects/1234/blendshapes')


def main():
    facemesh_detector = FaceMeshDetector()

    cap = cv2.VideoCapture(0)

    while True:
        ret, frame = cap.read()
        if not ret:
            # No frame available (camera closed or disconnected).
            break

        # OpenCV delivers BGR frames, while the detector wraps them as SRGB.
        frame_rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)
        facemesh_detector.update(frame_rgb)
        landmarks, blendshapes = facemesh_detector.get_results()
        if (landmarks is None) or (blendshapes is None):
            continue

        # Key each score by its index in the blendshape list.
        blendshapes_dict = {k: v for k, v in enumerate(blendshapes)}
        exe = pool.submit(ref.set, blendshapes_dict)

        cv2.imshow('frame', frame)
        if cv2.waitKey(1) & 0xFF == ord('q'):
            break

    cap.release()
    cv2.destroyAllWindows()
    # Let any pending database writes finish before exiting.
    pool.shutdown(wait=True)


if __name__ == '__main__':
    main()
```

Next, the blendshape data is transmitted from the Firebase Realtime Database to Google Cloud's Immersive Stream for XR instances in real time. Google Cloud’s Immersive Stream for XR is a managed service that runs Unreal Engine projects in the cloud, then renders and streams immersive, photorealistic 3D and augmented reality (AR) experiences to smartphones and browsers in real time.

This integration enables KDDI to drive character face animation and achieve real-time streaming of facial animation with minimal latency, ensuring an immersive user experience.

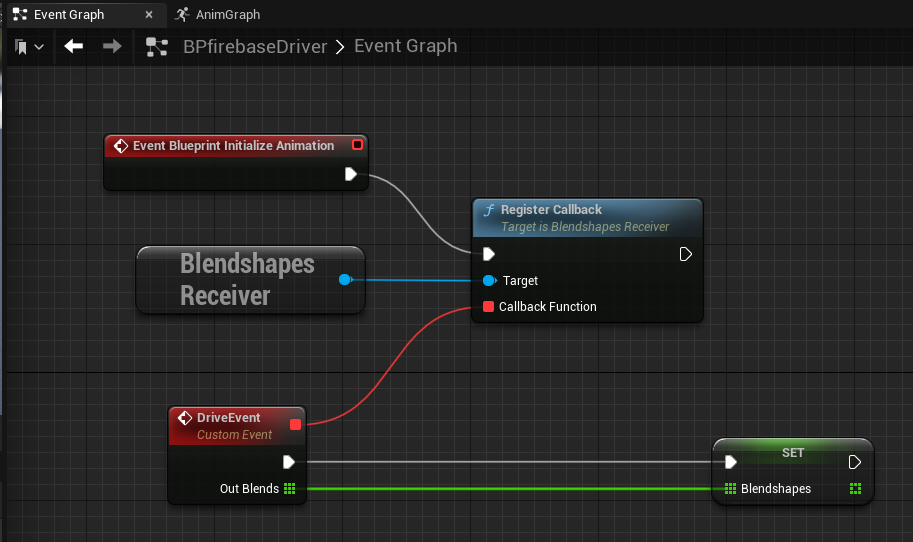

On the Unreal Engine side, which runs on Immersive Stream for XR, we use the Firebase C++ SDK to receive data from Firebase. By registering a database listener, we retrieve blendshape values as soon as they are updated in the Firebase Realtime Database. This gives Unreal Engine projects real-time access to the latest blendshape data, enabling dynamic and responsive facial animation.
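The receiver described here is written with the Firebase C++ SDK. The Python sketch below is not that code; it only illustrates the listener idea, assuming events shaped like those passed to `firebase_admin`'s `Reference.listen()` callback (with `event_type`, `path`, and `data` attributes). `BlendshapeCache` and `make_callback` are hypothetical names introduced for illustration.

```python
from typing import Any, Callable, Dict


class BlendshapeCache:
    """Keeps the latest score per blendshape index, updated from listener events."""

    def __init__(self) -> None:
        self.scores: Dict[int, float] = {}

    def apply(self, event_type: str, path: str, data: Any) -> None:
        """Merge one event; path is "/" for the whole node or "/<index>" for one row."""
        key = path.strip("/")
        if key:  # a single row changed
            if data is None:
                self.scores.pop(int(key), None)
            else:
                self.scores[int(key)] = float(data)
            return
        if event_type == "put":  # "put" replaces the node, "patch" merges into it
            self.scores.clear()
        if data is None:
            return
        # Rows keyed 0, 1, 2, ... can come back as a list rather than a dict.
        items = enumerate(data) if isinstance(data, list) else data.items()
        for index, value in items:
            if value is None:
                self.scores.pop(int(index), None)
            else:
                self.scores[int(index)] = float(value)


def make_callback(cache: BlendshapeCache) -> Callable[[Any], None]:
    """Build a callback that feeds listener events into the cache."""
    def on_event(event: Any) -> None:
        cache.apply(event.event_type, event.path, event.data)
    return on_event
```

With `firebase_admin` initialized as in the upload script, `db.reference('projects/1234/blendshapes').listen(make_callback(cache))` would start delivering events on a background thread.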

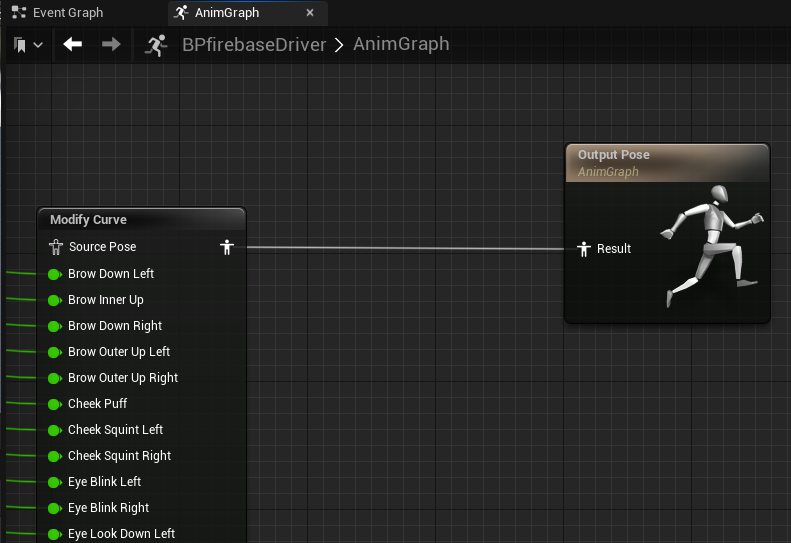

After retrieving blendshape values from the Firebase SDK, we can drive the face animation in Unreal Engine by using the "Modify Curve" node in the animation blueprint. Each blendshape value is assigned to the character individually on every frame, allowing for precise and real-time control over the character's facial expressions.
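The per-frame assignment can be pictured as a loop over the received scores. The article does this in an animation blueprint with "Modify Curve"; the Python sketch below only mirrors the logic, and `set_curve` is a hypothetical stand-in for whatever writes one curve value on the character. Clamping to the range [0, 1] is an assumption added here, not something the article states.

```python
from typing import Callable, Dict, Mapping


def apply_blendshapes(scores: Mapping[str, float],
                      set_curve: Callable[[str, float], None]) -> None:
    """Write every blendshape score to its curve, clamped to the range [0, 1]."""
    for name, value in scores.items():
        set_curve(name, min(1.0, max(0.0, float(value))))


if __name__ == "__main__":
    pose: Dict[str, float] = {}
    # pose.__setitem__ stands in for the engine call that sets one curve value.
    apply_blendshapes({"browInnerUp": 0.42, "browDownLeft": 1.3},
                      pose.__setitem__)
    print(pose)
```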

An effective approach for implementing a realtime database listener in Unreal Engine is to utilize the GameInstance Subsystem, which serves as an alternative singleton pattern. This allows for the creation of a dedicated BlendshapesReceiver instance responsible for handling the database connection, authentication, and continuous data reception in the background.

By leveraging the GameInstance Subsystem, the BlendshapesReceiver instance can be instantiated and maintained throughout the lifespan of the game session. This ensures a persistent database connection while the animation blueprint reads and drives the face animation using the received blendshape data.
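The pattern is a single long-lived object that one thread writes to while another reads from it every frame. The Python sketch below is an analogy for that arrangement, not Unreal Engine code: `get_receiver` plays the role the subsystem plays in providing one shared instance, and the method names `on_update` and `snapshot` are hypothetical.

```python
import threading
from typing import Dict, Optional


class BlendshapesReceiver:
    """Long-lived holder for the latest blendshape scores."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._latest: Dict[int, float] = {}

    def on_update(self, scores: Dict[int, float]) -> None:
        """Called from the listener thread whenever new data arrives."""
        with self._lock:
            self._latest = dict(scores)

    def snapshot(self) -> Dict[int, float]:
        """Called once per frame by the animation side; returns a copy."""
        with self._lock:
            return dict(self._latest)


_receiver: Optional[BlendshapesReceiver] = None
_receiver_lock = threading.Lock()


def get_receiver() -> BlendshapesReceiver:
    """Return the one shared receiver, creating it on first use."""
    global _receiver
    with _receiver_lock:
        if _receiver is None:
            _receiver = BlendshapesReceiver()
        return _receiver
```

Returning a copy from `snapshot` means the reader never sees a dictionary that is being replaced mid-frame, at the cost of one small copy per frame.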

Using just a local PC running MediaPipe, KDDI succeeded in capturing the real performer’s facial expression and movement, and created high-quality 3D re-target animation in real time.

KDDI is collaborating with developers of metaverse anime fashion, such as Adastria Co., Ltd.

To learn more, watch Google I/O 2023 sessions: Easy on-device ML with MediaPipe, Supercharge your web app with machine learning and MediaPipe, What's new in machine learning, and check out the official documentation over on developers.google.com/mediapipe.

This MediaPipe integration is one example of how KDDI is eliminating the boundary between the real and virtual worlds, allowing users to enjoy everyday experiences such as attending live music performances, enjoying art, having conversations with friends, and shopping―anytime, anywhere.

KDDI’s αU provides services for the Web3 era, including the metaverse, live streaming, and virtual shopping, shaping an ecosystem where anyone can become a creator, supporting the new generation of users who effortlessly move between the real and virtual worlds.