What happens when two phones stop being rivals and start being friends? You get the world’s friendliest competitive campaign: Best Phones Forever. Across 17 episodes, this series has taken the phones on a range of adventures and built a loyal audience of fans.

Engaging directly with that fan community has always been part of the Best Phones Forever playbook. For last year’s series launch, our team trained an LLM on the tone of the campaign to help community managers generate friendship-themed responses to thousands of comments. And with rapid advancements in generative technology, we saw an opportunity to take that spirit of real-time engagement at scale even further.

Enter Best Phones Forever: AI Roadtrip — our first experiment in using generative AI to put fans in the driver's seat and bring these characters to life.

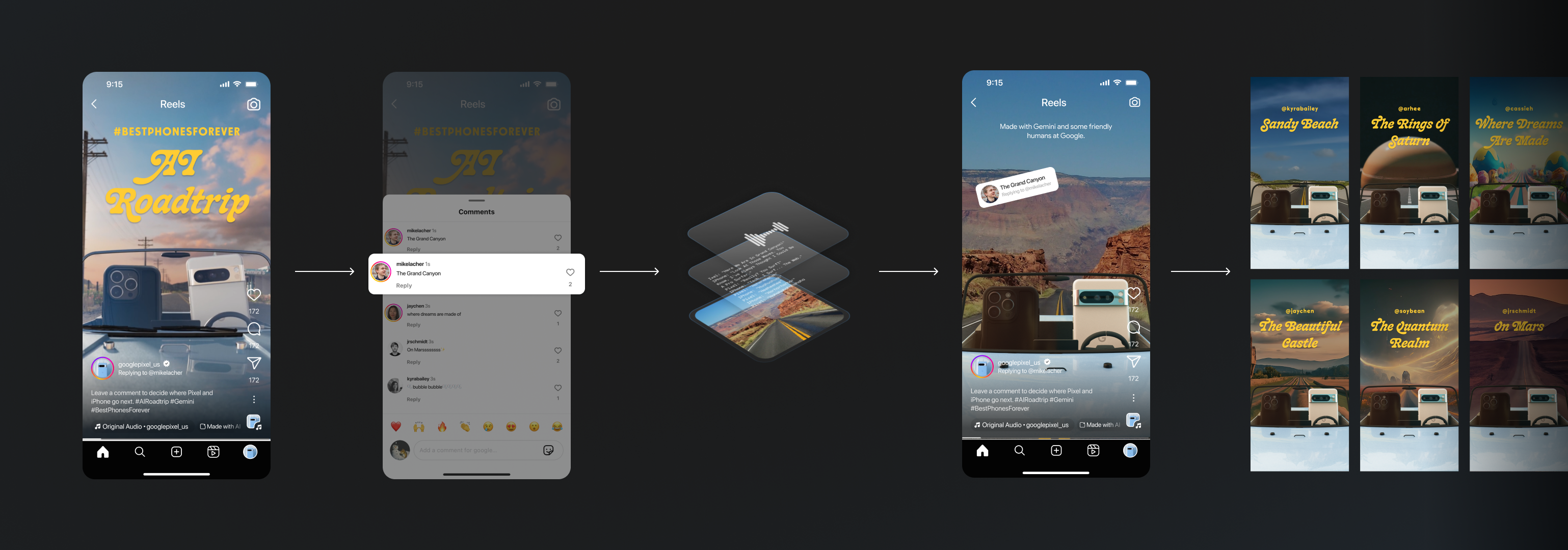

Here’s how it works: An episode on Instagram Reels explains that the two characters are going on a road trip powered by AI. When a fan comments with a location idea, our team uses a purpose-built tool to generate a custom video response within minutes. Over 16 hours, we plan to create as many unique replies as possible.

Working with our partners The Mill and Left Field Labs, we used a stack of Google AI models to design a tool that balances machine efficiency with human ingenuity. We’re hoping some of our takeaways inspire you to explore your own creative applications of these technologies.

To see the activation in action, visit @googlepixel_us on Instagram.

After a user comments a suggested location, we take that location – for example, “the Grand Canyon” – and enter it into our generation engine to produce the customized assets for the video: a script, a background image, and audio.

Our creative team is in the loop at each step, selecting, editing, reviewing, and occasionally re-prompting to make sure every video feels like it’s truly part of the Best Phones Forever universe.

We needed Gemini to reliably produce scripts in the voice of the campaign, with the correct characters, length, formatting, and style, while also being entertaining and true to whatever location a user suggested.

We found the most effective way to do this wasn’t with lengthy directions, but by providing numerous examples in the prompt. Our writers created short scripts about Pixel and iPhone in different locations and the kinds of conversation they might have in each place.

Feeding these into Gemini as part of the system prompt accomplished two things. First, it set in place the desired length and structure of our generated scripts, with each phone taking a turn in a 4-6-line format. Second, it conditioned the model to output the kinds of dialogue we wanted to hear in these videos (observations about the location, phone-related humor, friendly banter, and more than a few dad jokes).
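As a rough illustration of this few-shot approach, here is a minimal Python sketch that assembles a system prompt from example scripts. The `ExampleScript` type, the helper names, the header wording, and the sample dialogue are all hypothetical placeholders, not the campaign's actual prompt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExampleScript:
    """A human-written sample script for one location (illustrative type)."""
    location: str
    lines: tuple[tuple[str, str], ...]  # (speaker, dialogue) pairs


def format_example(example: ExampleScript) -> str:
    """Render one example in the layout the model should imitate."""
    body = "\n".join(f"{speaker}: {text}" for speaker, text in example.lines)
    return f"Location: {example.location}\n{body}"


def build_system_prompt(examples: list[ExampleScript]) -> str:
    """Combine a brief instruction with the few-shot examples."""
    header = (
        "You write short, friendly road-trip scripts for two phones. "
        "Match the length, format, and tone of the examples."
    )
    shots = "\n\n".join(format_example(e) for e in examples)
    return f"{header}\n\n{shots}"


# Placeholder example; real prompts would include many such scripts.
EXAMPLES = [
    ExampleScript(
        location="the Grand Canyon",
        lines=(
            ("Pixel", "Wow, that is a lot of canyon."),
            ("iPhone", "I'd call it grand."),
            ("Pixel", "Should we take a photo?"),
            ("iPhone", "Only if we're both in it."),
        ),
    ),
]

SYSTEM_PROMPT = build_system_prompt(EXAMPLES)
```

The header stays deliberately short: in this sketch the examples, not the instructions, carry the length, turn-taking, and tone.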

We designed this prompt to work as a co-writer with human writers, so an important consideration was making sure Gemini would produce a wide range of scripts that focused on different aspects of a location and took different approaches to the conversation between Pixel and iPhone. That way, our human writers could choose the script that worked best, make edits, or combine several scripts.

To ensure this breadth of responses, we had Gemini write scripts conversationally. After Gemini produced one script, we asked it to produce a different one, and then a different one, and so on, all in the context of a single conversation. That way, it could see the scripts that were previously generated and make sure the new ones covered new ground — giving the human curators a wide range of options.
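The conversational pattern can be sketched roughly as follows. This is a minimal illustration, not the production tool: the `model` argument stands in for whatever chat-capable client is used, and the message format, role names, and follow-up wording are assumptions.

```python
from typing import Callable

Message = dict[str, str]  # {"role": ..., "content": ...} (assumed format)
ModelFn = Callable[[list[Message]], str]


def generate_variants(
    model: ModelFn, system_prompt: str, location: str, count: int
) -> list[str]:
    """Ask for `count` scripts in one conversation so each new request
    can see every script generated before it."""
    history: list[Message] = [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": f"Write a script for: {location}"},
    ]
    scripts: list[str] = []
    for i in range(count):
        reply = model(history)
        scripts.append(reply)
        # Keep the reply in context so the next script can avoid repeating it.
        history.append({"role": "model", "content": reply})
        if i < count - 1:
            history.append(
                {"role": "user",
                 "content": "Write a different script for the same location."}
            )
    return scripts


def fake_model(history: list[Message]) -> str:
    """Stand-in model that only reports how many drafts it has seen."""
    prior = sum(1 for m in history if m["role"] == "model")
    return f"draft {prior + 1}"


drafts = generate_variants(fake_model, "system prompt here", "Paris", 3)
```

Swapping `fake_model` for a real client call is the only change needed to try the pattern against an actual model.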

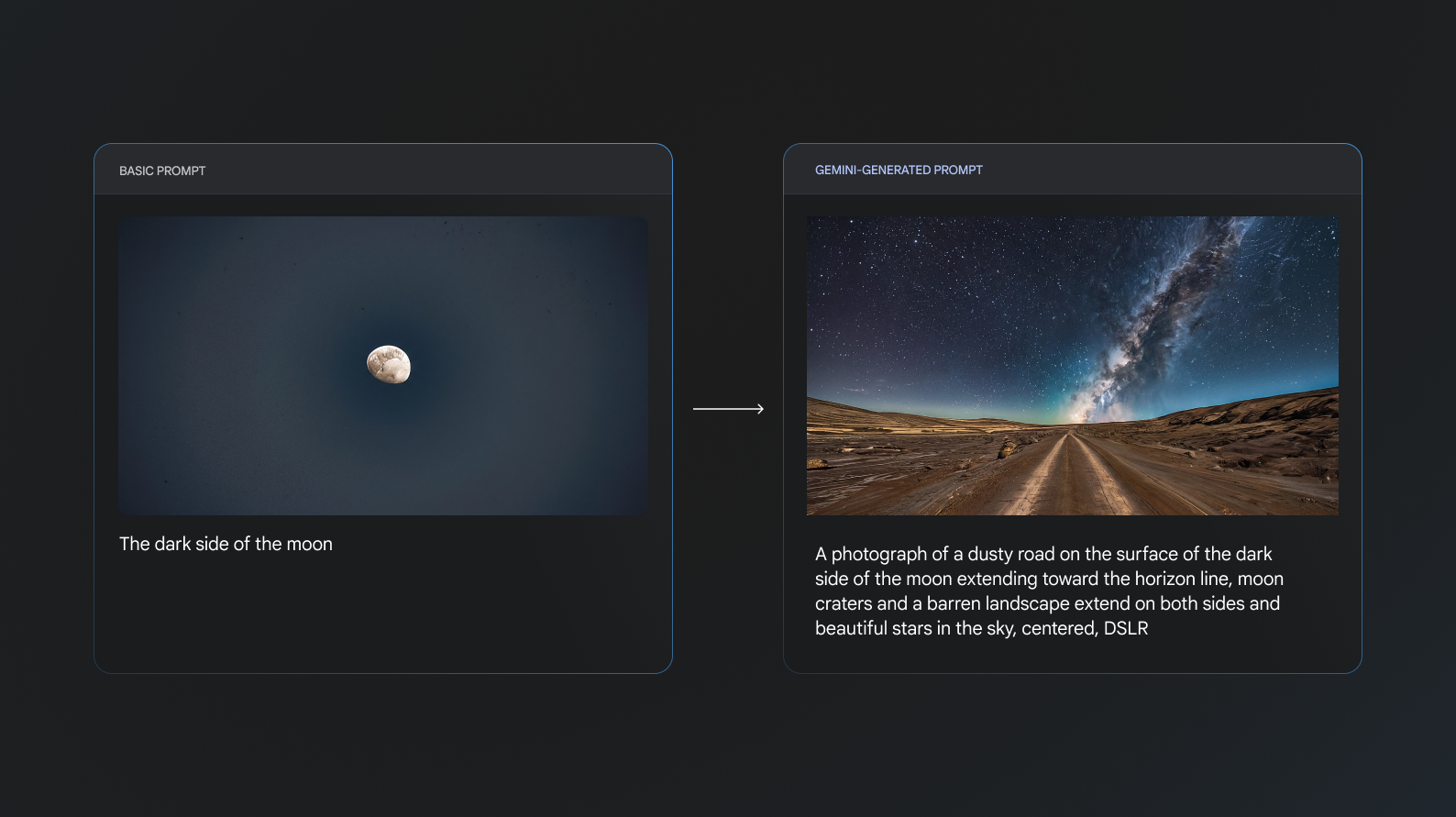

We used Imagen 2 to provide the image generation for our backgrounds. As Google’s latest generally available model, it gave our team the ability to generate the wide variety of locations and styles that this campaign required, with powerful natural-language controls to help us tune each output.

We wanted Imagen to create backgrounds for all kinds of locations, but we also wanted the backgrounds to be compositionally similar to accommodate Pixel and iPhone driving in the foreground.

Simply prompting the model with a location like “Paris” or “the dark side of the moon” would yield images that looked like the locations, but were inconsistent both stylistically and compositionally. Some would be too zoomed out, some would be black and white, and some wouldn’t have any area on which Pixel and iPhone could “drive.”

Additional instructions could help generate better images, but we found that tailoring that language to each location was manual and time-consuming. That’s why we decided to use Gemini to generate the image prompts. After a human writer inputs a location, Gemini creates a prompt for that location based on a number of sample prompts written by humans. That prompt is then sent to Imagen, which generates the image.
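The two-step chain described here (location, then a generated image prompt, then an image) might look something like the sketch below. The sample prompts, function names, and the `text_model` and `image_model` callables are hypothetical stand-ins; no real Gemini or Imagen client calls are shown.

```python
from typing import Callable

# Hypothetical human-written samples. Each one pins down composition
# (camera height, open road, color) as well as the location itself.
SAMPLE_PROMPTS = [
    ("Paris",
     "Color photo, eye-level view down an empty Paris boulevard, "
     "Eiffel Tower on the horizon, open road across the lower third."),
    ("the dark side of the moon",
     "Color photo, eye-level view across a flat lunar plain, "
     "craters on the horizon, open ground across the lower third."),
]


def build_meta_prompt(location: str) -> str:
    """Ask the text model for an image prompt in the style of the samples."""
    shots = "\n\n".join(
        f"Location: {loc}\nImage prompt: {prompt}"
        for loc, prompt in SAMPLE_PROMPTS
    )
    return f"{shots}\n\nLocation: {location}\nImage prompt:"


def generate_background(
    location: str,
    text_model: Callable[[str], str],
    image_model: Callable[[str], bytes],
) -> tuple[str, bytes]:
    """Chain the two models: text model writes the prompt, image model renders it."""
    image_prompt = text_model(build_meta_prompt(location)).strip()
    return image_prompt, image_model(image_prompt)
```

Returning the generated prompt alongside the image keeps it available for human review or re-prompting.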

We found that using AI-generated prompts yielded images that were both more compositionally consistent and more visually interesting. The backgrounds of our videos aren’t just static assets, though; once they’re ingested into Unreal Engine, they become a crucial part of the scene – more on that in the section below.

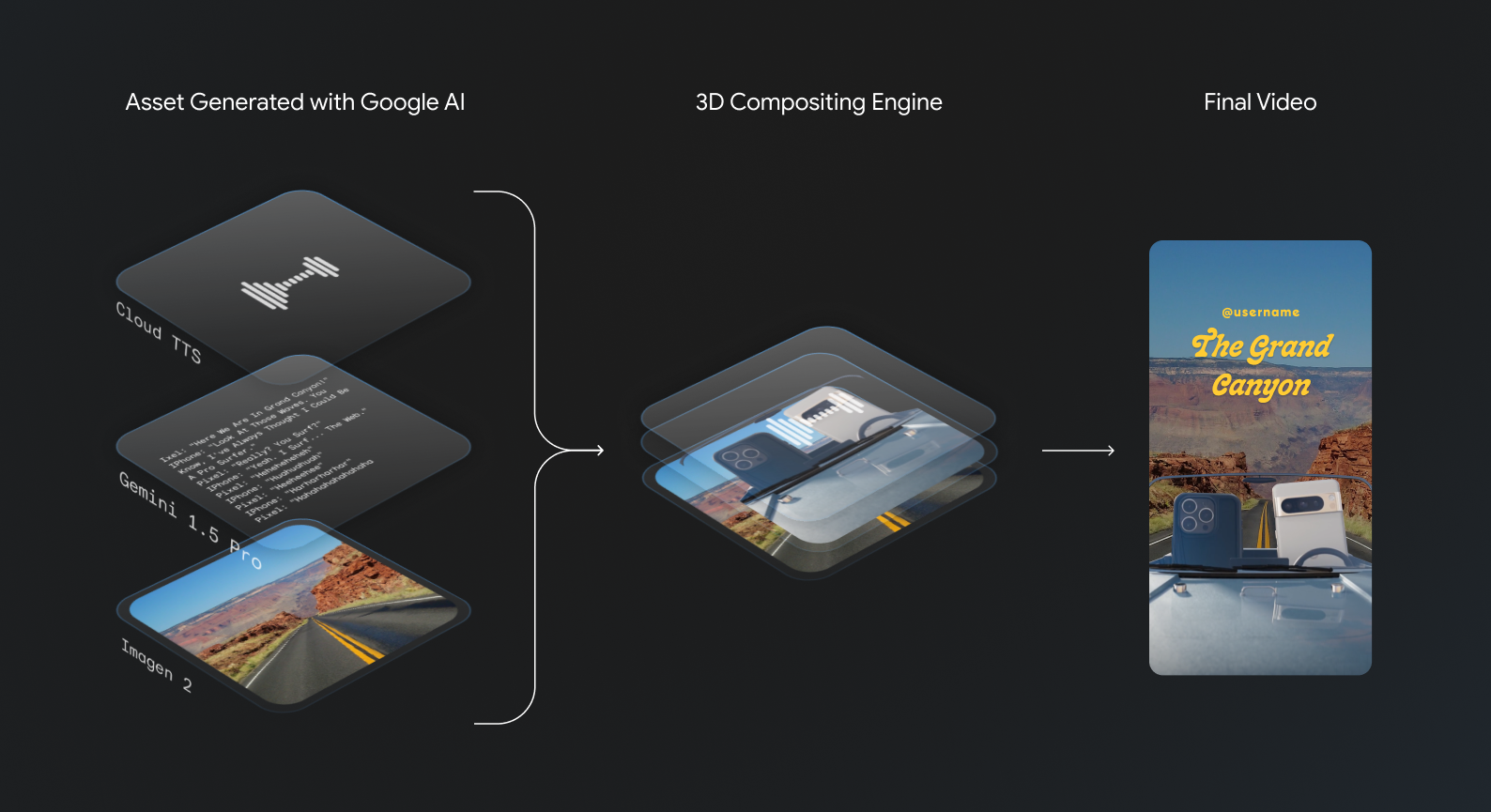

After we finalize the scripts, we send each line to Cloud Text-to-Speech to generate the audio. This is the same process we’ve used for all of the character voices in the Best Phones Forever campaign.
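One way to picture the "each line" step is to split a finalized script into one synthesis job per line of dialogue. The sketch below assumes a `Speaker: text` script layout and uses placeholder voice identifiers; neither is confirmed by the campaign, and the actual Cloud Text-to-Speech call is intentionally left out.

```python
from dataclasses import dataclass

# Placeholder voice identifiers, not real Cloud Text-to-Speech voice names.
VOICES = {
    "Pixel": "voice-pixel-placeholder",
    "iPhone": "voice-iphone-placeholder",
}


@dataclass(frozen=True)
class SpeechJob:
    """One line of dialogue to synthesize (illustrative type)."""
    index: int    # position in the script, used to order the audio clips
    speaker: str
    voice: str
    text: str


def script_to_jobs(script: str) -> list[SpeechJob]:
    """Split a 'Speaker: text' script into per-line synthesis jobs."""
    jobs: list[SpeechJob] = []
    for raw in script.splitlines():
        line = raw.strip()
        if not line:
            continue  # skip blank lines between turns
        # Split on the first colon only, so dialogue may contain colons.
        speaker, sep, text = line.partition(":")
        speaker = speaker.strip()
        if not sep or speaker not in VOICES:
            raise ValueError(f"Unrecognized script line: {line!r}")
        jobs.append(SpeechJob(len(jobs), speaker, VOICES[speaker], text.strip()))
    return jobs
```

Each job could then be passed to a text-to-speech client with the voice chosen for that character.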

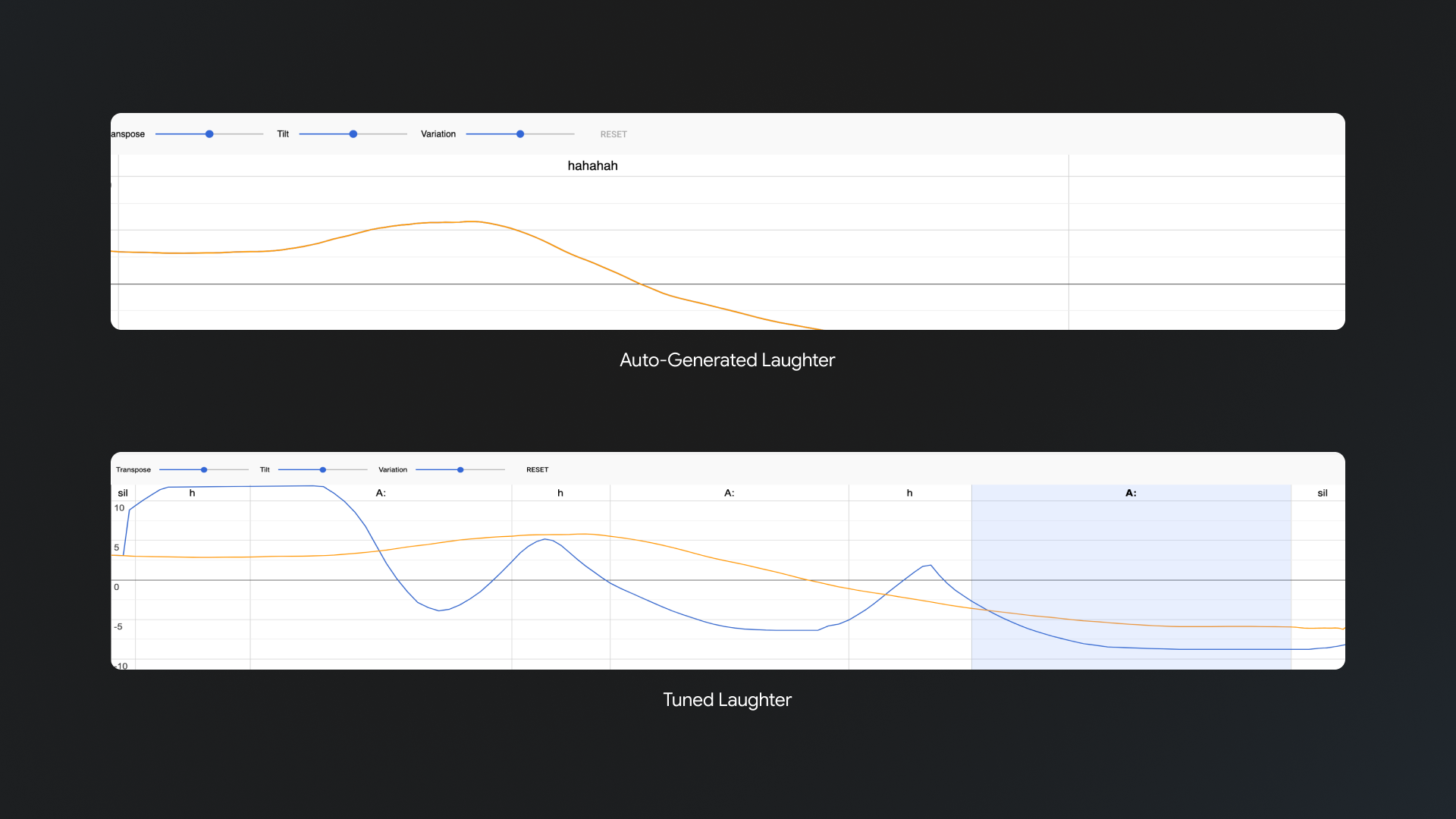

While we lean on Cloud TTS to synthesize high-fidelity, natural-sounding speech, our voices for Pixel and iPhone have their own characteristics. Here, we haven’t found an AI model that can really help our creatives to hit the specific timbre and cadence we want. Instead, we use internal tooling to add emphasis and inflections to really bring our characters to life.

Some videos also have ambient audio beneath the dialogue. We use a mix of composed sound effects, field recordings, and, of course, AI-generated audio with MusicFX to create soundscapes for the location and add an extra touch of realism.

Once all of the constituent assets are produced, they automatically populate a render queue to be ingested by Unreal Engine and composited into a 3D scene with iPhone, Pixel, and the car.

The background image wraps around the rear and sides of the scene, providing not just the background for the straight-on shots of the phones and the car, but the angled perspectives we see when the camera moves to highlight one character speaking. Parts of the background are captured in the reflections on the car hood and even the glass of the phones’ cameras, while the sky above interacts with the lighting of the scene to add even more detail and realism.

Our nonlinear animation editor allows our creatives to add motion to each individual phone in all of our camera positions. For instance, if a phone asks a question, it may orient towards the other phone, leaning and tilting in a tentative way, rather than looking out the window or through the windshield. Statements, jokes, agreement, and surprise all have their own unique animations, and we seamlessly interpolate between all of them and our rest state.
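To give a feel for what interpolating between an animation and the rest state can mean, here is a small sketch that blends two poses with an ease-in, ease-out curve. The `Pose` fields, the angle values, and the choice of smoothstep easing are assumptions for illustration; the article does not describe how the actual editor blends animations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose:
    """Orientation of a phone in degrees (illustrative representation)."""
    yaw: float
    pitch: float
    roll: float


REST = Pose(yaw=0.0, pitch=0.0, roll=0.0)
QUESTION = Pose(yaw=25.0, pitch=0.0, roll=8.0)  # hypothetical "asking" pose


def smoothstep(t: float) -> float:
    """Ease-in, ease-out weight for t clamped to [0, 1]."""
    t = min(max(t, 0.0), 1.0)
    return t * t * (3.0 - 2.0 * t)


def blend(a: Pose, b: Pose, t: float) -> Pose:
    """Interpolate from pose a (t=0) to pose b (t=1) with eased timing."""
    w = smoothstep(t)
    return Pose(
        yaw=a.yaw + (b.yaw - a.yaw) * w,
        pitch=a.pitch + (b.pitch - a.pitch) * w,
        roll=a.roll + (b.roll - a.roll) * w,
    )


# Sample five evenly spaced frames of the phone turning to ask a question.
frames = [blend(REST, QUESTION, i / 4) for i in range(5)]
```

Easing rather than blending linearly avoids abrupt starts and stops when a phone leaves or returns to its rest pose.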

Finally, our creatives can turn on the dynamic elements and textures that really personalize each video – like mud splatter on the hood for rustic locations and a variety of hats for (most) weather conditions. Some locations might also merit a total transformation of the car, from trusty rover to submarine or spaceship.

Creatives can preview their video's VO, camera cuts, and primary animations before hitting render. Once they’re ready, all of the render jobs are dispatched across 15 virtual machines on Google Cloud Compute. From start to finish, a short video can be generated in as little as 10 minutes, including render time.
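The article does not say how jobs are distributed across the machines, so the following is only a sketch of one simple option: round-robin assignment over 15 workers. The function name and job identifiers are hypothetical.

```python
def assign_round_robin(
    job_ids: list[str], worker_count: int = 15
) -> dict[int, list[str]]:
    """Spread render jobs evenly across workers, one job at a time."""
    if worker_count < 1:
        raise ValueError("worker_count must be at least 1")
    assignment: dict[int, list[str]] = {w: [] for w in range(worker_count)}
    for i, job_id in enumerate(job_ids):
        # Job i goes to worker i mod N, so queue lengths differ by at most one.
        assignment[i % worker_count].append(job_id)
    return assignment


# Example: 32 queued videos spread over the 15 machines.
plan = assign_round_robin([f"video-{i}" for i in range(32)])
```

A real dispatcher would more likely hand the next job to whichever machine becomes free first, since render times vary with the scene.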

Using generative AI for creative development and production isn't a new idea. But we're excited to have built an application that combines Google's latest production-ready models in a novel way and takes an idea to real-time delivery at scale.

A typical Best Phones Forever video takes weeks to write, animate, and render. With this tool, our creatives hope to generate hundreds of custom mini-episodes in a single day — all inspired by the imagination of the Pixel community on social.

We hope that this experiment gives you a glimpse of what’s possible using the Gemini and Imagen APIs, whatever your creative destination may be.