Last year, we put some of the sharpest developers in a room, gave them brutal time constraints, and challenged them to build fully autonomous AI agents capable of solving massive, real-world industry problems.

That was the premise of the Google Cloud AI Agent Bake-Off: the raw, unfiltered reality of building agentic applications under pressure. We watched teams tackle e-commerce returns, modernize legacy banking systems, and fully automate startup Go-To-Market strategies.

Watching these teams win (and occasionally crash and burn live on the clock) fundamentally changed how we think about building agents. The biggest takeaway? The honeymoon phase of simply chatting with an LLM is over. Moving from a cool demo to a production-ready application isn't about better prompt engineering anymore. It’s about rigorous agentic engineering: multi-agent architecture, state management, and deterministic guardrails.

Whether you are scaling a startup or modernizing enterprise infrastructure, we boiled down the chaos, the late-night pivots, and the brilliant breakthroughs from the entire series into the 5 real-world patterns that actually work.

Here is your architectural blueprint for building the next generation of AI agents.

Trying to prompt a single, massive LLM to handle intent extraction, database retrieval, and stylistic reasoning all at once is a fast track to hallucinations and latency spikes. To scale, treat agents like microservices. Decompose complex problems into specialized sub-agents with tightly scoped prompts, managed by a supervisor agent that routes the traffic. We saw this pay massive dividends in Episode 3, where Team Daniel and Luis ran tightly scoped agents in parallel to cut their processing time from 1 hour down to just 10 minutes. As a bonus, this modular approach makes maintenance far easier: if you need to introduce a new model or change a database schema, you only touch one sub-agent instead of risking the entire workflow.
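To make the supervisor pattern concrete, here is a minimal sketch in Python. It is not code from any Bake-Off team: the sub-agent names (`extract_intent`, `lookup_order`, `draft_reply`) and the `Supervisor` class are hypothetical, and each sub-agent is a stub standing in for a model or database call. What it shows is the shape of the idea: small, independent agents registered with a supervisor that fans a request out to them concurrently.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List

# Hypothetical sub-agents. In a real system each would wrap its own
# tightly scoped prompt and model call; here they are plain stubs.
async def extract_intent(request: str) -> str:
    await asyncio.sleep(0)  # stands in for a model call
    return "return_item" if "return" in request.lower() else "unknown"

async def lookup_order(request: str) -> str:
    await asyncio.sleep(0)  # stands in for a database query
    return "order found"

async def draft_reply(request: str) -> str:
    await asyncio.sleep(0)  # stands in for a second model call
    return "reply drafted"

SubAgent = Callable[[str], Awaitable[str]]

class Supervisor:
    """Holds a registry of sub-agents and runs the requested ones concurrently."""

    def __init__(self) -> None:
        self._agents: Dict[str, SubAgent] = {}

    def register(self, name: str, agent: SubAgent) -> None:
        self._agents[name] = agent

    async def handle(self, request: str, names: List[str]) -> Dict[str, str]:
        # Independent sub-agents run in parallel instead of one after another.
        results = await asyncio.gather(*(self._agents[n](request) for n in names))
        return dict(zip(names, results))

def run_demo() -> Dict[str, str]:
    supervisor = Supervisor()
    supervisor.register("intent", extract_intent)
    supervisor.register("orders", lookup_order)
    supervisor.register("reply", draft_reply)
    return asyncio.run(
        supervisor.handle("I want to return my shoes", ["intent", "orders", "reply"])
    )

if __name__ == "__main__":
    print(run_demo())
```

Because each sub-agent sits behind the same one-argument interface, swapping the model or the database behind one of them leaves the supervisor and the other agents untouched.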

The Multi-Agent Workflow Architecture:

During the e-commerce challenge, the goal was to close the "imagination gap" for retailers by building a virtual try-on experience using Gemini 2.0. The challengers built incredibly compelling, multi-step solutions in a brutal three-hour sprint. But here is the reality of AI engineering: none of us knew that the first version of Nano Banana was just weeks away. Today, that same complex virtual try-on experience can be achieved in a single prompt. Developers must architect with a mindset of impermanence. You have to be okay with the fact that the complex agent harness you write today might be replaced in a few months, or even weeks, by advancements in state-of-the-art models. Build modularly so you can deprecate old code the moment native models catch up.
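One way to build for impermanence is to hide the harness behind a small interface so it can be swapped out without touching callers. The sketch below is an illustration under assumptions, not anyone's actual try-on code: `TryOn`, `MultiStepTryOn`, `SinglePromptTryOn`, and `build_try_on` are hypothetical names, and the `render` methods return descriptive strings where a real system would call models.

```python
from typing import Callable, Dict, List, Protocol

class TryOn(Protocol):
    """The only contract callers depend on."""
    def render(self, user_photo: str, garment: str) -> str: ...

class MultiStepTryOn:
    """Hypothetical hand-built harness that chains several steps."""

    def __init__(self) -> None:
        self.steps: List[str] = []

    def render(self, user_photo: str, garment: str) -> str:
        self.steps = ["segment_person", "fit_garment", "compose_image"]
        return f"composite({user_photo}, {garment}) via {len(self.steps)} steps"

class SinglePromptTryOn:
    """Hypothetical replacement once a native model handles the task in one call."""

    def render(self, user_photo: str, garment: str) -> str:
        return f"composite({user_photo}, {garment}) via 1 prompt"

# Switching backends is a one-line configuration change, not a rewrite.
REGISTRY: Dict[str, Callable[[], TryOn]] = {
    "multi_step": MultiStepTryOn,
    "single_prompt": SinglePromptTryOn,
}

def build_try_on(backend: str) -> TryOn:
    return REGISTRY[backend]()

if __name__ == "__main__":
    print(build_try_on("multi_step").render("me.png", "jeans"))
    print(build_try_on("single_prompt").render("me.png", "jeans"))
```

When the simpler backend becomes good enough, the multi-step class can be deleted and the rest of the application does not change.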

Agent Harness Diagram:

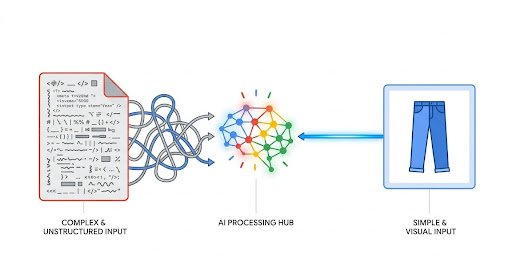

In Episode 3, we threw a curveball at the developers: they had to inject a multimodal experience into their existing agents. We quickly learned that a text recommendation like "you should wear blue jeans" fundamentally fails to solve real-world retail problems. The old cliché holds true: a picture is worth a thousand words—or in the world of AI, a much more accurate prompt. The best architectures moved beyond text by natively integrating multimodal models to ingest user photos, extract visual context, and dynamically trigger image-generation tools to render a composite visual. Treating multimodality as a native feature rather than an afterthought dramatically increases accuracy and creates a radically more organic user experience.

Multimodal Prompt vs Image input

Connecting to legacy banking systems or enterprise tools by writing custom API wrappers for every internal agent is a massive waste of time. The growing landscape of AI development is overloaded with alphabet soup: MCP, A2A, UCP, AP2, A2UI, and AG-UI. It is easy to feel like you're staring at a wall of competing standards. However, mastering this "alphabet soup" is what separates fragile prototypes from scalable production systems. By adopting open standards, your agents can reach tools and data through the Model Context Protocol (MCP), discover one another via the standardized "Agent Cards" of the Agent2Agent (A2A) protocol, and communicate using structured JSON payloads, saving you from writing and maintaining brittle integration code for every tool your agent touches.
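The discovery idea can be sketched in a few lines of Python. This is a deliberately simplified stand-in: the card fields (`name`, `url`, `skills`), the example URLs, and the helper functions `find_agents` and `build_request` are assumptions made for illustration and do not reproduce the schema of any actual protocol specification. The point is only that an agent looks up who can do a job from published metadata and then sends a structured JSON message, with no custom wrapper per peer.

```python
import json
from typing import Any, Dict, List

# Illustrative card shape only. Real protocol schemas define their own fields.
AGENT_CARDS: List[Dict[str, Any]] = [
    {
        "name": "inventory-agent",
        "url": "https://example.com/inventory",
        "skills": ["check_stock"],
    },
    {
        "name": "supplier-agent",
        "url": "https://example.com/supplier",
        "skills": ["place_order", "quote_price"],
    },
]

def find_agents(cards: List[Dict[str, Any]], skill: str) -> List[Dict[str, Any]]:
    """Return every card that advertises the requested skill."""
    return [card for card in cards if skill in card["skills"]]

def build_request(card: Dict[str, Any], skill: str, params: Dict[str, Any]) -> str:
    """Serialize a request as JSON so any peer can parse it the same way."""
    return json.dumps({"to": card["name"], "skill": skill, "params": params}, sort_keys=True)

if __name__ == "__main__":
    supplier = find_agents(AGENT_CARDS, "place_order")[0]
    print(build_request(supplier, "place_order", {"item": "flour", "qty": 10}))
```

Adding a new peer in this sketch means publishing one more card. The calling agent's code stays the same.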

To put this into practice today, try using the Agent Development Kit (ADK) to build a multi-step supply chain agent for a restaurant. A bare LLM will hallucinate, but as you layer in these open protocols one by one, your agent can suddenly check real inventory databases, communicate with remote supplier agents, execute secure transactions, and render interactive, streaming dashboards. Stop writing custom glue code and start leveraging open protocols to future-proof your multi-agent architecture.

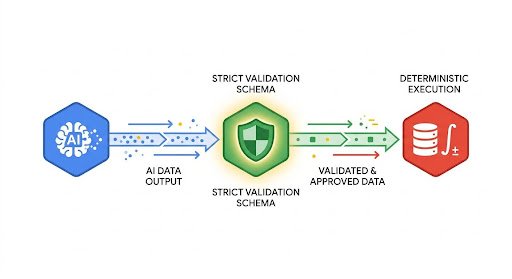

Developer Guide to AI Agent Protocols

Asking an LLM to directly calculate compound interest is practically a fireable offense—it is inherently probabilistic and will eventually hallucinate the math. During the legacy banking challenge, we saw teams try to let the AI do the mathematical heavy lifting on financial transactions, which immediately triggered massive validation errors. The winning pattern is simple: reserve the LLM strictly for reasoning and intent extraction. Use rigid JSON validation schemas (like Pydantic) to capture the model's output variables. Once the variables pass strict validation, hand them off to a traditional, 100% deterministic Python function or SQL query to actually execute the calculation or the database write. Let the AI do the thinking, but let your traditional code do the doing.
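Here is a minimal sketch of that hand-off. To stay dependency-free it uses a standard-library dataclass for validation where the text suggests Pydantic, which would fill the same role. The `InterestRequest` fields, the allowed rate range, and the function names are assumptions chosen for illustration. The model's only job is to emit the JSON, and the arithmetic runs in ordinary code with `Decimal`.

```python
import json
from dataclasses import dataclass
from decimal import Decimal, ROUND_HALF_UP

@dataclass(frozen=True)
class InterestRequest:
    """Variables extracted by the model, validated before any math runs."""
    principal: Decimal
    annual_rate: Decimal  # expressed as a fraction, e.g. 0.05 for 5%
    years: int
    compounds_per_year: int

    def __post_init__(self) -> None:
        if self.principal <= 0:
            raise ValueError("principal must be positive")
        if not (Decimal("0") <= self.annual_rate <= Decimal("1")):
            raise ValueError("annual_rate must be between 0 and 1")
        if self.years <= 0 or self.compounds_per_year <= 0:
            raise ValueError("years and compounds_per_year must be positive")

def parse_model_output(raw: str) -> InterestRequest:
    """Parse the model's JSON text. Missing keys or bad values raise an error."""
    data = json.loads(raw)
    return InterestRequest(
        principal=Decimal(str(data["principal"])),
        annual_rate=Decimal(str(data["annual_rate"])),
        years=int(data["years"]),
        compounds_per_year=int(data["compounds_per_year"]),
    )

def compound_interest(req: InterestRequest) -> Decimal:
    """Deterministic calculation: principal * (1 + r/n) ** (n * t), rounded to cents."""
    periods = req.years * req.compounds_per_year
    growth = (Decimal(1) + req.annual_rate / req.compounds_per_year) ** periods
    return (req.principal * growth).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)

if __name__ == "__main__":
    raw = '{"principal": 1000, "annual_rate": 0.05, "years": 2, "compounds_per_year": 1}'
    print(compound_interest(parse_model_output(raw)))  # prints 1102.50
```

If the model returns a negative principal or omits a field, the request fails at the validation step and never reaches the calculation or the database.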

Intro to Controlled Generation with the Gemini API: Structured Output Google Colab

Building production-grade agents that are reliable, secure, and useful is a serious engineering challenge. As the AI Agent Bake-Off proved, the teams that succeeded weren't the ones with the most complex "God Prompts" or the flashiest single-shot demos. They were the ones who respected the fundamentals of rigorous software architecture.

If you are starting a build today, don't reinvent the wheel or write custom integrations from scratch. Grab the foundational architecture, load up your models, and start breaking your monolithic applications into specialized, deterministic, and highly capable micro-agents.

Get Started with Building Today using our Quickstart Resources: