OCT. 15, 2025 / AI

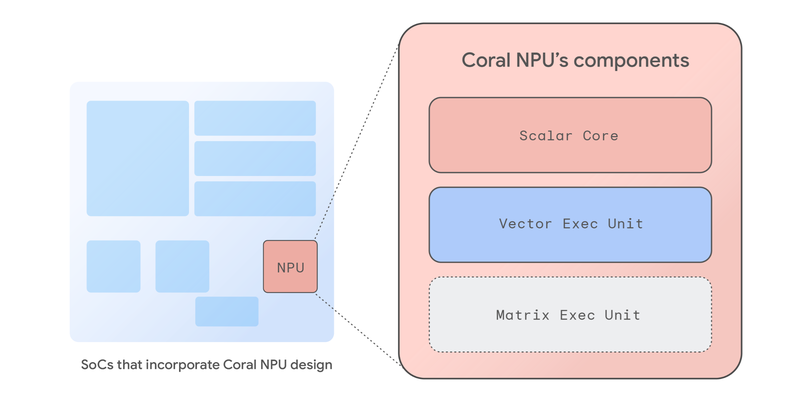

Coral NPU is a full-stack platform for Edge AI, addressing performance, fragmentation, and user trust deficits. It's an AI-first architecture, prioritizing ML matrix engines, and offers a unified developer experience. Designed for ultra-low-power, always-on AI in wearables and IoT, it enables contextual awareness, audio/image processing, and user interaction with hardware-enforced privacy. Synaptics is the first partner to implement Coral NPU.

OCT. 8, 2025 / Web

This guide shows you how to fine-tune the Gemma 3 270M model for custom tasks, like an emoji translator. Learn to quantize and convert the model for on-device use, deploying it in a web app with MediaPipe or Transformers.js for a fast, private, and offline-capable user experience.

SEPT. 30, 2025 / AI

Tunix is a new JAX-native, open-source library for LLM post-training. It offers comprehensive tools for aligning models at scale, including SFT, preference tuning (DPO), advanced RL methods (PPO, GRPO, GSPO), and knowledge distillation. Designed for TPUs and seamless JAX integration, Tunix emphasizes developer control and shows a 12% relative improvement in pass@1 accuracy on GSM8K.
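As a reading aid for the "12% relative improvement" figure, the sketch below shows how a relative gain differs from an absolute one. The baseline and tuned accuracies are hypothetical placeholders chosen only to make the arithmetic visible; they are not Tunix's reported GSM8K scores, and `relative_improvement` is an illustrative helper, not part of any library.

```python
def relative_improvement(baseline: float, tuned: float) -> float:
    """Return the gain of `tuned` over `baseline` as a fraction of `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (tuned - baseline) / baseline


# Hypothetical pass@1 accuracies, for illustration only.
baseline_acc = 0.50
tuned_acc = 0.56

rel = relative_improvement(baseline_acc, tuned_acc)  # gain relative to the baseline
abs_gain = tuned_acc - baseline_acc                  # gain in absolute accuracy

print(f"relative: {rel:.0%}, absolute: {abs_gain * 100:.0f} points")
```

With these placeholder values, a 12% relative improvement corresponds to a gain of 6 percentage points, so the same headline figure implies different absolute gains depending on the baseline.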

SEPT. 29, 2025 / AI

EmbeddingGemma, built from Gemma 3, transforms text into numerical embeddings for tasks like search and retrieval. It learns through Noise-Contrastive Estimation, Global Orthogonal Regularizer, and Geometric Embedding Distillation. Matryoshka Representation Learning allows flexible embedding dimensions. The development recipe includes encoder-decoder training, pre-fine-tuning, fine-tuning, model souping, and quantization-aware training.
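The flexible dimensions that Matryoshka Representation Learning provides are typically used by keeping a prefix of the full vector and rescaling it to unit length. The sketch below illustrates that idea in plain Python, under assumptions: the 8-dimensional vector is a toy stand-in rather than real EmbeddingGemma output, and `truncate_embedding` is a hypothetical helper, not a function from any Gemma library.

```python
import math


def truncate_embedding(vec: list[float], dim: int) -> list[float]:
    """Keep the first `dim` components of `vec` and rescale to unit length."""
    if not 0 < dim <= len(vec):
        raise ValueError("dim must be between 1 and len(vec)")
    head = vec[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in head]


# Toy 8-dimensional vector standing in for a full-size embedding.
full = [0.5, -0.3, 0.4, 0.1, 0.05, -0.02, 0.01, 0.03]

# A shorter embedding costs less to store and compare.
small = truncate_embedding(full, 4)
print(len(small), small)
```

Renormalizing after truncation keeps cosine similarity and dot product equivalent for the shortened vectors, which is usually what a vector index expects.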

SEPT. 24, 2025 / Mobile

Google AI Edge provides the tools to run AI features on-device, and its new LiteRT-LM runtime is a significant leap forward for generative AI. LiteRT-LM offers an open-source C++ API, cross-platform compatibility, and hardware acceleration, and is designed to efficiently run large language models like Gemma and Gemini Nano across a vast range of hardware. Its key innovation is a flexible, modular architecture that can scale to power complex, multi-task features in Chrome and Chromebook Plus, while also being lean enough for resource-constrained devices like the Pixel Watch. This versatility is already enabling a new wave of on-device generative AI, bringing capabilities like WebAI and smart replies to users.

SEPT. 16, 2025 / AI

The Agent Development Kit (ADK) for Java 0.2.0 now integrates with LangChain4j, expanding LLM support to include third-party and local models like Gemma and Qwen. This release also enhances tooling with instance-based FunctionTools, improved async support, better loop control, and advanced agent logic with chained callbacks and new memory management.

SEPT. 9, 2025 / Mobile

Google AI Edge has expanded the Gemma 3n preview to include audio support. Users can try it on their own mobile phones using the Google AI Edge Gallery, which is now available in Open Beta on the Play Store.

SEPT. 4, 2025 / Gemma

Introducing EmbeddingGemma: a new embedding model from Google designed for efficient on-device AI applications. This open model is the highest-ranking text-only multilingual embedding model under 500M parameters on the MTEB benchmark, enabling powerful features like RAG and semantic search directly on mobile devices without an internet connection.

SEPT. 4, 2025 / AI

Learn how to use Google's EmbeddingGemma, an efficient open model, with Google Cloud's Dataflow and vector databases like AlloyDB to build scalable, real-time knowledge ingestion pipelines.

AUG. 14, 2025 / Gemma

Google's new Gemma 3 270M is a compact, 270-million parameter model offering energy efficiency, production-ready quantization, and strong instruction-following, making it a powerful solution for task-specific fine-tuning in on-device and research settings.