Building and deploying AI agents is an exciting frontier, but managing these complex systems in production requires robust observability. AgentOps is a Python SDK for agent monitoring, LLM cost tracking, benchmarking, and more. It helps developers take their agents from prototype to production, especially when paired with the capable, cost-effective Gemini API.

Adam Silverman, COO of Agency AI, the team behind AgentOps, explains that cost is a critical factor for enterprises deploying AI agents at scale. "We've seen enterprises spend $80,000 per month on LLM calls. With Gemini 1.5, this would have been a few thousand dollars for the same output."

This cost-effectiveness, combined with Gemini's powerful language understanding and generation capabilities, makes it an ideal choice for developers building sophisticated AI agents. "Gemini 1.5 Flash is giving us comparable quality to larger models, at a fraction of the cost while being incredibly fast," says Silverman. This allows developers to focus on building complex, multi-step agent workflows without worrying about runaway costs.

"We have seen individual agent runs with other LLM providers cost $500+ per run. These same runs with Gemini (1.5 Flash-8B) cost under $50."

– Adam Silverman, COO, Agency AI

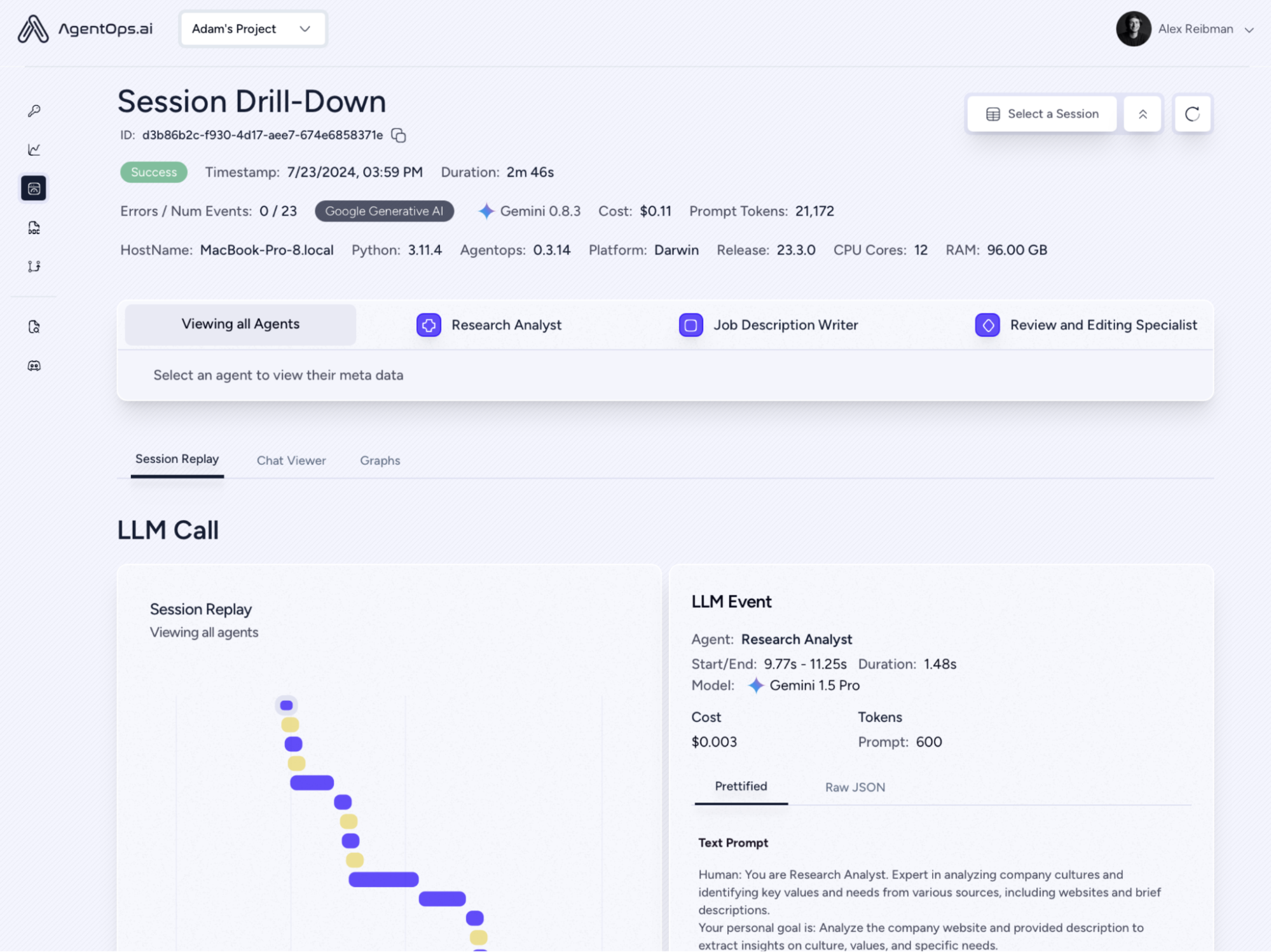

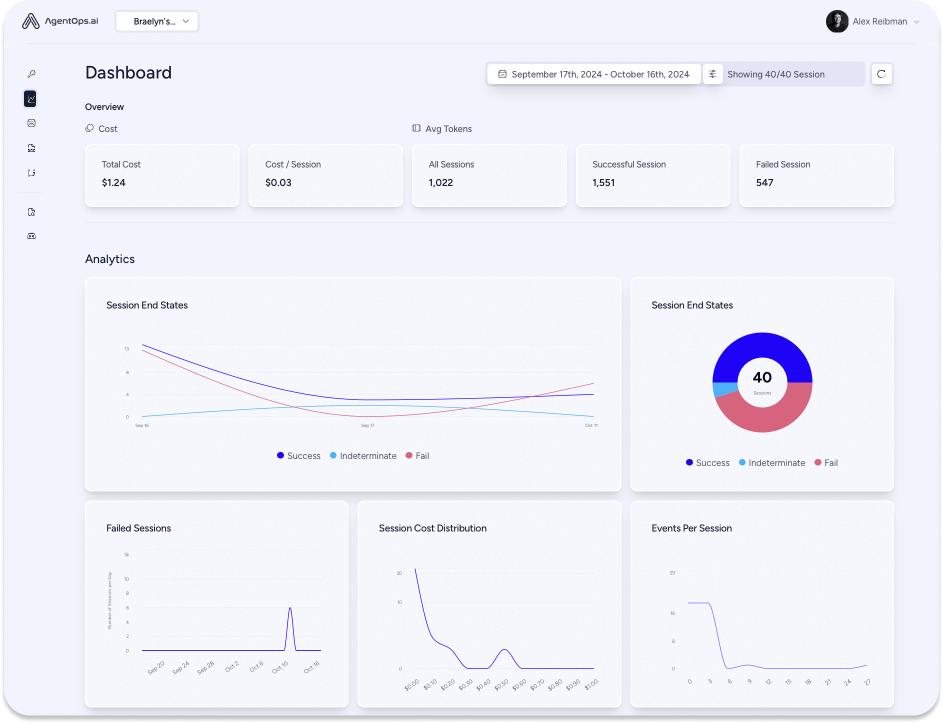

AgentOps captures data on every agent interaction, not just LLM calls, providing a comprehensive view of how multi-agent systems operate. This granular level of detail is essential for engineering and compliance teams, offering crucial insights for debugging, optimization, and audit trails.

Integrating Gemini models with AgentOps is simple and often takes just minutes using LiteLLM. Developers can quickly gain visibility into their Gemini API calls, track costs in real time, and ensure the reliability of their agents in production.

AgentOps is committed to supporting agent developers as they scale their projects, and Agency AI is helping enterprises navigate the complexities of building affordable, scalable agents with AgentOps and the Gemini API. As Silverman emphasizes, "It is ushering more price-conscious developers to build agents."

For developers considering using Gemini, Silverman's advice is clear: "Give it a try, and you will be impressed."