Use compression to make the web faster

Every day, more than 99 human years are wasted because of uncompressed content. Although support for compression is a standard feature of all modern browsers, there are still many cases in which users of these browsers do not receive compressed content. This wastes bandwidth and slows down users' interactions with web pages.

Uncompressed content hurts all users. For bandwidth-constrained users, it takes longer just to transfer the additional bits. For broadband connections, even though the bits are transferred quickly, it takes several round trips between client and server before the two can communicate at the highest possible speed. For these users, the number of round trips is the larger factor in determining the time required to load a web page. Even for well-connected users, these round trips often take tens of milliseconds and sometimes well over one hundred milliseconds.
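To make the round-trip argument concrete, here is a minimal sketch that counts round trips under an idealized TCP slow start. Everything numeric in it is an assumption for illustration, not data from this article: a 1460-byte segment size, an initial window of 3 segments that doubles every round trip, no packet loss, and a hypothetical page that shrinks from 100,000 bytes to 30,000 bytes when compressed.

```python
import math


def round_trips(size_bytes, mss=1460, initial_window=3):
    """Estimate round trips needed to deliver size_bytes.

    Idealized model (an assumption, not a measurement): the sender
    starts with `initial_window` segments and doubles the window
    every round trip, with no loss and no receiver-window limit.
    """
    segments_left = math.ceil(size_bytes / mss)
    window = initial_window
    trips = 0
    while segments_left > 0:
        segments_left -= window  # one window's worth is sent per round trip
        window *= 2              # slow start doubles the window each time
        trips += 1
    return trips


# Hypothetical page sizes, chosen only for illustration.
uncompressed_trips = round_trips(100_000)
compressed_trips = round_trips(30_000)
print(uncompressed_trips, compressed_trips)  # prints "5 3"
```

Under these assumptions the compressed page saves two round trips. At tens of milliseconds or more per round trip, that saving matters even when bandwidth is plentiful.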

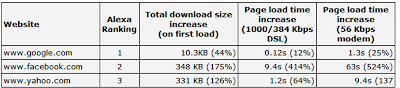

In Steve Souders' book Even Faster Web Sites, Tony Gentilcore presents data showing how page load time increases with compression disabled. With permission, we've reproduced the results for the three highest-ranked sites from the Alexa top 100 here:

Data, with permission, from Steve Souders, "Chapter 9: Going Beyond Gzipping," in Even Faster Web Sites (Sebastopol, CA: O'Reilly, 2009), 122.

The data from Google's web search logs show that the average page load time for users getting uncompressed content is 25% higher than for users getting compressed content. In a randomized experiment where we forced compression for some users who would otherwise not get compressed content, we measured a latency improvement of 300ms. While this experiment did not capture the full difference, that is probably because users getting forced compression have older computers and older software.

We have found that there are four major reasons why users do not get compressed content: anti-virus software, browser bugs, web proxies, and misconfigured web servers. The first three modify the web request so that the web server does not know that the browser can uncompress content. Specifically, they remove or mangle the Accept-Encoding header that is normally sent with every request.
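The sketch below illustrates why a missing or mangled Accept-Encoding header leads to uncompressed responses. It is a simplified model of server-side negotiation, not the behavior of any particular web server: the function names `accepts_gzip` and `build_response` are hypothetical, the request headers are a plain dictionary with exact-case keys, and the wildcard `*` coding is ignored for brevity.

```python
import gzip


def accepts_gzip(accept_encoding):
    """Return True if an Accept-Encoding value lists gzip with q > 0."""
    if not accept_encoding:
        return False
    for item in accept_encoding.split(","):
        parts = item.strip().split(";")
        coding = parts[0].strip().lower()
        quality = 1.0  # default weight when no q parameter is given
        for param in parts[1:]:
            name, _, value = param.strip().partition("=")
            if name.strip().lower() == "q":
                try:
                    quality = float(value)
                except ValueError:
                    quality = 0.0  # treat an unparsable weight as "not acceptable"
        if coding in ("gzip", "x-gzip") and quality > 0:
            return True
    return False


def build_response(body, request_headers):
    """Compress body only when the request advertises gzip support."""
    if accepts_gzip(request_headers.get("Accept-Encoding")):
        headers = {"Content-Encoding": "gzip", "Vary": "Accept-Encoding"}
        return gzip.compress(body), headers
    return body, {"Vary": "Accept-Encoding"}


page = b"<p>hello, web</p>" * 200

# Normal browser request: the server compresses.
sent, _ = build_response(page, {"Accept-Encoding": "gzip, deflate"})
print(len(sent) < len(page))

# Header removed, or renamed by an intermediary (hypothetical mangled name):
# the server cannot tell that the browser supports compression.
sent, _ = build_response(page, {"X-Mangled-Encoding": "gzip, deflate"})
print(sent == page)
```

In both failure modes the browser is perfectly capable of decompressing, but the server falls back to the uncompressed body because the capability was never advertised.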

Anti-virus software may try to minimize CPU operations by intercepting and altering requests so that web servers send back uncompressed content. But if the CPU is not the bottleneck, the software is not doing users any favors. Some popular anti-virus programs interfere with compression. Users can check whether their anti-virus software is interfering with compression by visiting the browser compression test page at Browserscope.org.

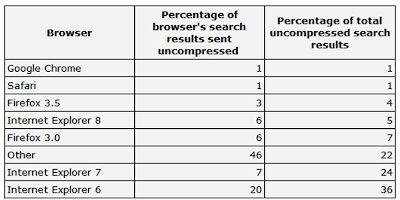

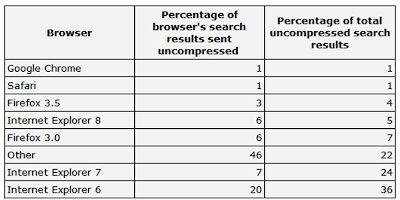

By default, Internet Explorer 6 downgrades to HTTP/1.0 when behind a proxy, and as a result does not send the Accept-Encoding request header. The table below, generated from Google's web search logs, shows that IE 6 accounts for 36% of all search results that are sent without compression. This number is far higher than the percentage of people using IE 6.

Data from Google Web Search Logs

There are a handful of ISPs where the percentage of uncompressed content is over 95%. One likely hypothesis is that either an ISP or a corporate proxy removes or mangles the Accept-Encoding header. As with anti-virus software, a user who suspects an ISP is interfering with compression should visit the browser compression test page at Browserscope.org.

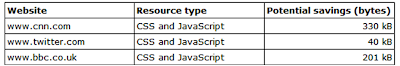

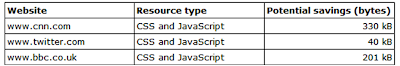

Finally, in many cases, users are not getting compressed content because the websites they visit are not compressing their content. The following table shows a few popular websites that do not compress all of their content. If these websites were to compress their content, they could decrease the page load times by hundreds of milliseconds for the average user, and even more for users on modem connections.

To reduce uncompressed content, we all need to work together.

- Corporate IT departments and individual users can upgrade their browsers, especially if they are using IE 6 with a proxy. Using the latest version of Firefox, Internet Explorer, Opera, Safari, or Google Chrome will increase the chances of getting compressed content. A recent editorial in IEEE Spectrum lists additional reasons, besides compression, for upgrading from IE 6.

- Anti-virus software vendors can start handling compression properly by no longer removing or mangling the Accept-Encoding header in upcoming releases of their software.

- ISPs that use an HTTP proxy that strips or mangles the Accept-Encoding header can upgrade, reconfigure, or replace it with a better proxy that doesn't prevent their users from getting compressed content.

- Webmasters can use Page Speed (or other similar tools) to check that the content of their pages is compressed.
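For webmasters who want a quick scripted check alongside such tools, here is a minimal sketch using only the Python standard library. It requests a page while advertising gzip and deflate support, then inspects the Content-Encoding response header. The function names `is_compressed` and `check_url` are hypothetical, and the sketch checks a single URL only, not the scripts, stylesheets, and other resources a page loads.

```python
import urllib.request


def is_compressed(content_encoding):
    """Return True if a Content-Encoding value names a compression coding."""
    if not content_encoding:
        return False
    codings = [c.strip().lower() for c in content_encoding.split(",")]
    return any(c in ("gzip", "x-gzip", "deflate") for c in codings)


def check_url(url, timeout=10):
    """Fetch url advertising compression support and report whether
    the server answered with a compressed body."""
    request = urllib.request.Request(
        url, headers={"Accept-Encoding": "gzip, deflate"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return is_compressed(response.headers.get("Content-Encoding"))
```

A call such as `check_url("http://www.example.com/")` (a placeholder URL) returns `True` when the server compressed the response. A `False` result from a network known to pass the header through unmodified suggests the server itself is not configured to compress that resource.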

For more articles on speeding up the web, check out http://code.google.com/speed/articles/.

By Arvind Jain, Engineering Director, and Jason Glasgow, Staff Software Engineer